Why Your Team Isn't Seeing AI Benefits (And It's Not the Tools)

6 min read · Feb 16, 2026

#ai #teams #productivity #adoption #developer-experience

You rolled out AI coding tools. You got licenses, ran the demos, and encouraged the team to try them. Months later, the feedback is lukewarm: “We use it sometimes.” “It’s okay for small stuff.” “I’m not sure it’s actually faster.” Nobody’s seeing the dramatic productivity gains the vendor promised.

If this sounds familiar, you’re not alone. Developer surveys report that while 84% of developers use or plan to use AI tools, only 55% find them highly effective, and trust in AI output has dropped sharply. Adoption doesn’t equal impact. The gap between “we have AI” and “AI is helping us ship better, faster” is where most teams get stuck.

The good news: the problem usually isn’t the tools. It’s how and where they’re being used, how you’re measuring success, and what you’re doing (or not doing) to create conditions where benefits can actually show up. Here’s a practical way to diagnose what’s going wrong and what to change.

1. You’re Measuring the Wrong Things

Teams that “don’t see benefits” often haven’t defined what benefit looks like. If success is vague (“everyone should use AI”), you’ll never know if you’re there.

What usually happens: You track adoption (who’s using Copilot or Cursor) or activity (suggestions accepted, chats per day). Those go up. But cycle time, bug escape rate, and time-to-resolution stay the same—or get worse because review and verification are now the bottleneck. So the team rationally concludes: “We’re doing more AI, but we’re not actually better off.”

What to do: Define outcome metrics before pushing adoption. Pick 2–3 that matter: e.g. time from commit to production, incident resolution time, or developer satisfaction. Measure them before and after, and compare teams or time periods that use AI heavily vs. lightly. If you don’t see improvement on outcomes, the next step isn’t “use AI more”—it’s “use AI differently” (see below).
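To make the before/after comparison concrete, here is a minimal Python sketch that compares median commit-to-production lead time on either side of an AI rollout date. It assumes you can export a commit timestamp and a deploy timestamp for each change from your version control and deployment tooling. The `Change` record, the `compare_lead_time` function, and the rollout date are hypothetical names for illustration, not part of any particular tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Change:
    # Hypothetical record: one change, exported from your VCS and deploy logs.
    committed_at: datetime
    deployed_at: datetime


def lead_time_hours(change: Change) -> float:
    """Commit-to-production time for a single change, in hours."""
    return (change.deployed_at - change.committed_at).total_seconds() / 3600


def compare_lead_time(changes: list[Change], rollout: datetime) -> dict:
    """Median lead time for changes committed before vs. after `rollout`."""
    before = [lead_time_hours(c) for c in changes if c.committed_at < rollout]
    after = [lead_time_hours(c) for c in changes if c.committed_at >= rollout]
    if not before or not after:
        raise ValueError("need changes on both sides of the rollout date")
    return {
        "median_before_h": median(before),
        "median_after_h": median(after),
        "n_before": len(before),
        "n_after": len(after),
    }


if __name__ == "__main__":
    # Made-up sample data, only to show the call shape.
    t0 = datetime(2025, 12, 1)
    t1 = datetime(2026, 1, 5)
    sample = [Change(t0, t0 + timedelta(hours=h)) for h in (10, 20, 30)]
    sample += [Change(t1, t1 + timedelta(hours=h)) for h in (12, 18)]
    print(compare_lead_time(sample, rollout=datetime(2026, 1, 1)))
```

The sketch uses the median rather than the mean because a few long-running changes can skew lead time badly. For a heavy-vs-light comparison, you would group changes by team instead of by date. Check the `n_before` and `n_after` counts before drawing conclusions: a difference across a handful of changes is mostly noise.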

2. AI Is Being Used on the Wrong Tasks

AI helps a lot for some tasks and little or not at all for others. If your team is using it mainly for the wrong ones, they won’t see benefits—and may feel slower.

What usually happens: People use AI for everything: complex design, tricky bugs, boilerplate, and one-off scripts. The complex work gets worse (more back-and-forth, wrong abstractions, subtle bugs). The boilerplate gets better. Net effect feels like a wash or a slowdown, so the narrative becomes “AI doesn’t really help.”

What to do: Be explicit about where AI should and shouldn’t be the default. High-leverage, low-risk uses: documentation, repetitive refactors, tests for existing code, and well-scoped implementation tasks. Low-leverage or high-risk: architecture decisions, security-critical code, and anything that requires deep system context. Share this as a team so people don’t waste energy (and trust) on tasks where AI rarely wins.

3. The Verification Bottleneck Is Invisible

AI generates code quickly; humans still have to verify it. If that verification isn’t accounted for, “faster generation” turns into “more work” and no visible gain.

What usually happens: Developers accept more suggestions and ship more code, but they spend so much time reading, testing, and fixing AI output that end-to-end time doesn’t improve. Or quality drops and bugs increase, which then gets blamed on “AI code” rather than on missing process. Either way, the team doesn’t perceive a benefit.

What to do: Treat verification as part of the workflow, not an afterthought. Allocate time for review and testing of AI-generated code. Consider lighter review for low-risk areas and stricter review for critical paths. If you don’t, the bottleneck will stay hidden and benefits will stay invisible.
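One way to make “lighter review for low-risk areas, stricter review for critical paths” explicit is to write the tiers down as data. The Python sketch below maps hypothetical path patterns to review tiers and picks the strictest tier required by any changed file. The paths, tier names, and functions are illustrative assumptions, not a standard. In practice, you would enforce something similar through your code host’s ownership or branch-protection settings.

```python
from fnmatch import fnmatchcase

# Review tiers, ordered from lightest to strictest.
TIERS = ("light", "standard", "strict")

# Hypothetical policy: path pattern -> tier. The first matching pattern wins.
# Note: in fnmatch, "*" also matches "/", so "src/auth/*" covers subdirectories.
REVIEW_POLICY = [
    ("src/auth/*", "strict"),
    ("src/payments/*", "strict"),
    ("infra/*", "strict"),
    ("docs/*", "light"),
    ("tests/*", "light"),
]
DEFAULT_TIER = "standard"


def tier_for(path: str) -> str:
    """Review tier for one changed file."""
    for pattern, tier in REVIEW_POLICY:
        if fnmatchcase(path, pattern):
            return tier
    return DEFAULT_TIER


def required_tier(changed_paths: list[str]) -> str:
    """A change is only as low-risk as its riskiest file."""
    tiers = [tier_for(p) for p in changed_paths] or [DEFAULT_TIER]
    return max(tiers, key=TIERS.index)
```

Taking the strictest tier across all files prevents a risky edit from slipping through just because it was bundled with documentation changes.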

4. Trust Is Broken Before It’s Built

If people don’t trust the output, they’ll check everything—which cancels out speed gains. Or they’ll use AI only for throwaway work and conclude it’s “not for real code.”

What usually happens: Early experiences with wrong or insecure suggestions, or one bad production incident, make the team skeptical. They either over-verify (slow) or under-use (no impact). Surveys reflect this: trust in AI accuracy has fallen; only a small fraction of developers say they “highly trust” AI output.

What to do: Rebuild trust in small, observable steps. Start with low-risk use cases where output is easy to check (e.g. docs, tests, internal tools), so mistakes are cheap and wins are visible. Document what worked and what didn’t. Add guardrails (review rules, security checks) so that when AI is wrong, you catch it before it hurts. Trust grows when the team sees that using AI doesn’t mean lowering standards.

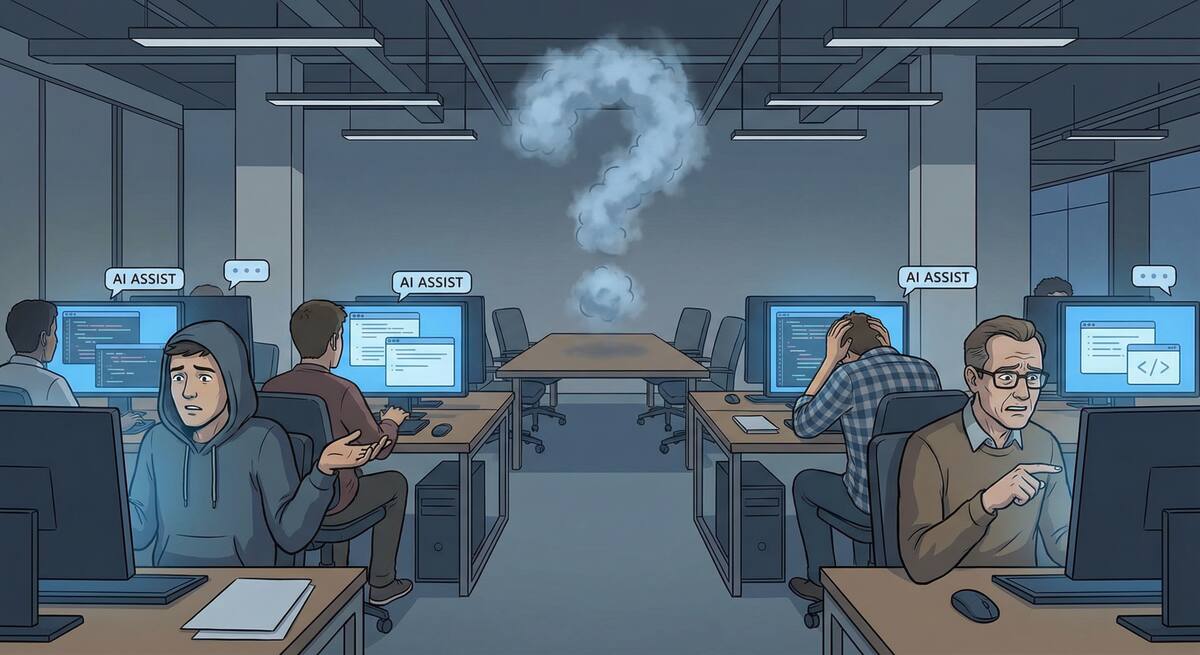

5. There’s No Shared Definition of “Better”

Sometimes the team is divided: some people feel faster, others feel slower. Without a shared definition of “better,” the overall story stays “we don’t see benefits.”

What usually happens: Senior devs on complex systems feel slowed down (context switching, reviewing plausible-but-wrong code). Junior devs or people doing more routine work feel sped up. If you only listen to one group or average everything, you miss the real picture.

What to do: Segment by role and task type. Survey or interview: “Where do you feel faster? Slower? Why?” Use that to steer AI toward the tasks and people where it actually helps, and stop pushing it where it doesn’t. One shared metric (e.g. cycle time) plus qualitative feedback by segment is enough to get a clear picture.
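The segmentation step can be as simple as tallying survey answers per (role, task type) pair instead of across the whole team. This minimal Python sketch assumes a hypothetical survey in which each person answers “faster,” “same,” or “slower” for a given kind of work. The field names and categories are illustrative.

```python
from collections import Counter, defaultdict
from typing import NamedTuple


class Response(NamedTuple):
    role: str       # e.g. "senior", "junior" (illustrative categories)
    task_type: str  # e.g. "complex", "routine"
    feels: str      # "faster", "same", or "slower"


def summarize_by_segment(responses: list[Response]) -> dict:
    """Share of 'faster' and 'slower' answers for each (role, task_type) segment."""
    counts: dict[tuple[str, str], Counter] = defaultdict(Counter)
    for r in responses:
        counts[(r.role, r.task_type)][r.feels] += 1

    summary = {}
    for segment, tally in counts.items():
        total = sum(tally.values())
        summary[segment] = {
            "n": total,
            "faster": tally["faster"] / total,
            "slower": tally["slower"] / total,
        }
    return summary


if __name__ == "__main__":
    survey = [
        Response("senior", "complex", "slower"),
        Response("senior", "complex", "slower"),
        Response("senior", "complex", "faster"),
        Response("junior", "routine", "faster"),
        Response("junior", "routine", "faster"),
    ]
    for segment, stats in summarize_by_segment(survey).items():
        print(segment, stats)
```

With this made-up sample, the team-wide picture is 3 of 5 people feeling faster, which sounds mildly positive. The per-segment view shows that seniors on complex work mostly feel slower, while juniors on routine work all feel faster. Averaging hides exactly this kind of split.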

6. Culture and Incentives Work Against Experimentation

If “trying AI” feels risky (e.g. “if something breaks, it’s on me”) or unrewarded (“we’re judged on velocity, not on learning”), people won’t invest in using it well.

What usually happens: Teams are told to adopt AI but are still measured and rewarded for short-term output. Experimenting with new workflows or spending time on verification feels like a cost. So people either avoid AI or use it in the safest, least impactful way—and report “no real benefit.”

What to do: Explicitly make “figuring out where AI helps” part of the job. Protect time for experimentation and for improving review and verification. Celebrate and share wins (e.g. “this workflow is now 30% faster”) so that visible benefits get attributed to AI. Align incentives so that learning and quality are valued, not just raw throughput.

Putting It Together: A Simple Diagnostic

Ask your team (and yourself) these questions:

- Outcomes: Are we measuring the right things (cycle time, quality, satisfaction), and do we see movement on them when we use AI?

- Tasks: Are we using AI mainly for tasks where it tends to help (docs, tests, boilerplate, well-scoped implementation)?

- Verification: Do we account for review and testing time, and have we adjusted our process so that AI-generated code is verified appropriately?

- Trust: Have we built trust through low-risk wins and clear guardrails, or are we stuck in over-verification or under-use?

- Segmentation: Do we know who feels helped vs. hurt by AI, and are we doubling down on the right use cases?

- Culture: Do people feel safe and rewarded for experimenting with AI and improving how the team uses it?

If the answer to most of these is “no” or “we’re not sure,” the fix isn’t a different tool. It’s how you’re using the tools you already have, how you’re measuring, and how you’re supporting the team. Once you address that, you can have an honest conversation about whether AI is actually delivering benefits, and where to go next.