Why NIST's AI Agent Standards Initiative Matters Right Now

- 3 minutes - Mar 21, 2026

- #ai #nist #standards #mcp #enterprise

One of the most consequential AI stories this month is not a product launch. It is the NIST AI Agent Standards Initiative.

NIST launched the effort through its Center for AI Standards and Innovation to focus on security, interoperability, and identity for AI agents. The initiative is structured around three pillars: industry-led standards development, open protocol support, and security research. It already has concrete deadlines attached, including a March security request for input and an April identity concept paper.

That may sound like policy-adjacent background noise. It is not.

Standards Efforts Usually Show Up Late

In most technology cycles, standards bodies arrive after years of product chaos. By the time standards work gets serious, the market has usually produced enough confusion that large organizations start demanding a common language for risk, interoperability, and procurement.

That is where agent systems are arriving now.

The signal is not just that NIST is paying attention. The stronger signal is that AI agents have become important enough, risky enough, and operational enough that a standards initiative now feels necessary.

Why Engineering Teams Should Care

It is tempting to think this is mainly for security or legal teams. In practice, engineering organizations are often the first to feel the consequences.

Standards shape:

- what shows up in enterprise RFPs

- what audit expectations become normal

- what identity and permission models vendors need to support

- which open protocols become safe enough to use broadly

If your team is adopting agentic tooling quickly, standards work is not abstract. It is an early preview of the constraints that will eventually land in procurement checklists, platform reviews, and enterprise architecture decisions.

MCP Is Part of This Story

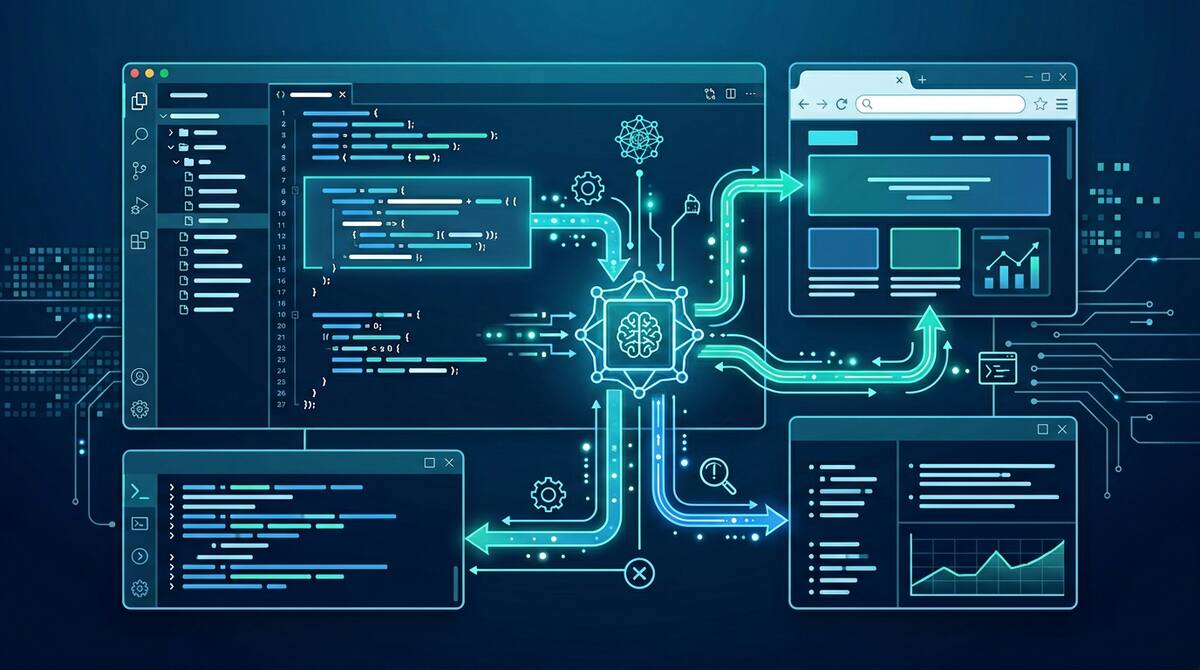

The initiative is especially relevant because the Model Context Protocol (MCP) is emerging as a leading standard candidate for agent-to-tool connectivity. That means the standards conversation is not happening in a vacuum. It is directly tied to the protocol layer many teams are already starting to adopt through coding tools, IDEs, and platform workflows.

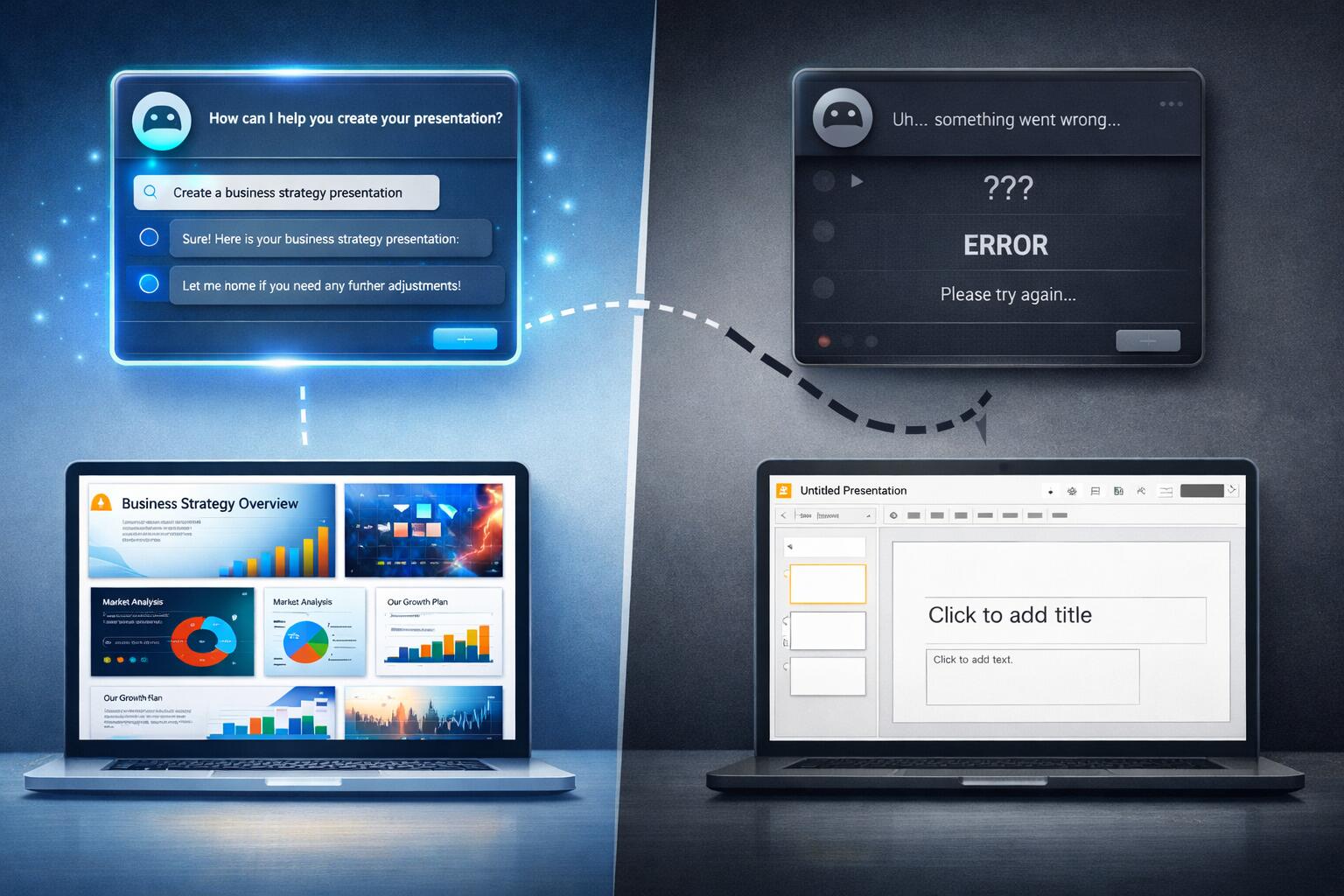

That is why the NIST move matters now rather than later. The tools are shipping first. The governance and security expectations are catching up. Teams that ignore the standards layer are effectively letting vendors make those decisions for them.

The Three Things to Watch

There are three especially important threads here:

Identity

Who is an agent acting as? A user? A service account? Something hybrid? Identity ambiguity is manageable in demos and painful in production.
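To make the question concrete, here is a minimal Python sketch of how a team might record who an agent is acting as for each action. Everything in it is an assumption for illustration: `AgentIdentity`, `ActingAs`, `audit_entry`, and the field names are hypothetical and do not come from NIST, MCP, or any vendor API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ActingAs(Enum):
    USER = "user"        # delegated: the agent acts for a named human
    SERVICE = "service"  # the agent runs under its own service account
    HYBRID = "hybrid"    # a service account acting within a user's delegation


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical record of who an agent is acting as for one action."""
    agent_id: str
    service_account: Optional[str] = None
    on_behalf_of: Optional[str] = None

    def acting_as(self) -> ActingAs:
        if self.service_account and self.on_behalf_of:
            return ActingAs.HYBRID
        if self.on_behalf_of:
            return ActingAs.USER
        if self.service_account:
            return ActingAs.SERVICE
        # No principal at all is the ambiguous case: fail instead of guessing.
        raise ValueError(f"agent {self.agent_id!r} has no principal")


def audit_entry(identity: AgentIdentity, action: str) -> dict:
    """Build an audit record naming the agent and every principal behind it."""
    return {
        "agent": identity.agent_id,
        "acting_as": identity.acting_as().value,
        "service_account": identity.service_account,
        "on_behalf_of": identity.on_behalf_of,
        "action": action,
    }
```

The one design choice worth noting is that an agent with no principal raises an error instead of defaulting to something. That is the kind of ambiguity that stays hidden in a demo and surfaces in production.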

Interoperability

If agents connect to tools through standards-based layers, portability improves, but the need for consistent behavior and shared security assumptions across implementations grows with it.

Security research

The more agents can do across enterprise systems, the less acceptable ad hoc security models become. Standards work is often where insecure defaults finally get named for what they are.

What This Means Practically

You do not need to become a standards expert to respond well. But you do need to understand that agentic development is leaving its purely experimental phase.

Practical next steps for teams:

- inventory where agentic tooling is already in use

- note which workflows depend on MCP or similar open connectivity layers

- start asking vendors harder questions about identity, auditability, and least privilege

- assume that enterprise requirements around agent governance are going to get stricter, not looser
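The first three steps can start as something very small. Below is a rough Python sketch of an inventory record and the vendor questions it implies. The `ToolRecord` fields, the `connectivity` values, and the question wording are all hypothetical placeholders, not a checklist from NIST or any standard.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ToolRecord:
    """Hypothetical inventory row for one agentic tool in use."""
    tool: str
    team: str
    connectivity: str          # assumed values: "mcp", "proprietary", "unknown"
    identity_documented: bool  # does the vendor say who the agent acts as?
    audit_log_available: bool  # can agent actions be reviewed afterwards?
    least_privilege: bool      # can permissions be scoped per task or tool?


def depends_on_mcp(records: List[ToolRecord]) -> List[str]:
    """Names of tools whose workflows rely on MCP connectivity."""
    return [r.tool for r in records if r.connectivity == "mcp"]


def vendor_questions(record: ToolRecord) -> List[str]:
    """Open questions to raise with the vendor, based on gaps in the record."""
    questions = []
    if record.connectivity == "unknown":
        questions.append("Which protocol connects the agent to tools?")
    if not record.identity_documented:
        questions.append("Who does the agent act as: user, service account, or both?")
    if not record.audit_log_available:
        questions.append("Where are agent actions logged, and who can review them?")
    if not record.least_privilege:
        questions.append("Can permissions be scoped per task or per tool?")
    return questions
```

A spreadsheet does the same job. What matters is that each tool has a recorded answer for connectivity, identity, auditability, and least privilege, so the gaps are visible before a procurement review asks about them.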

The right time to think about standards is before your organization is forced to think about standards.

NIST’s initiative matters because it signals a transition point. AI agents are no longer just interesting tools. They are becoming infrastructure that organizations will expect to govern, compare, and buy against common expectations.