When AI Slows You Down: Picking the Right Tasks

- 5 minutes - Feb 21, 2026

- #ai #productivity #tasks #teams #workflow

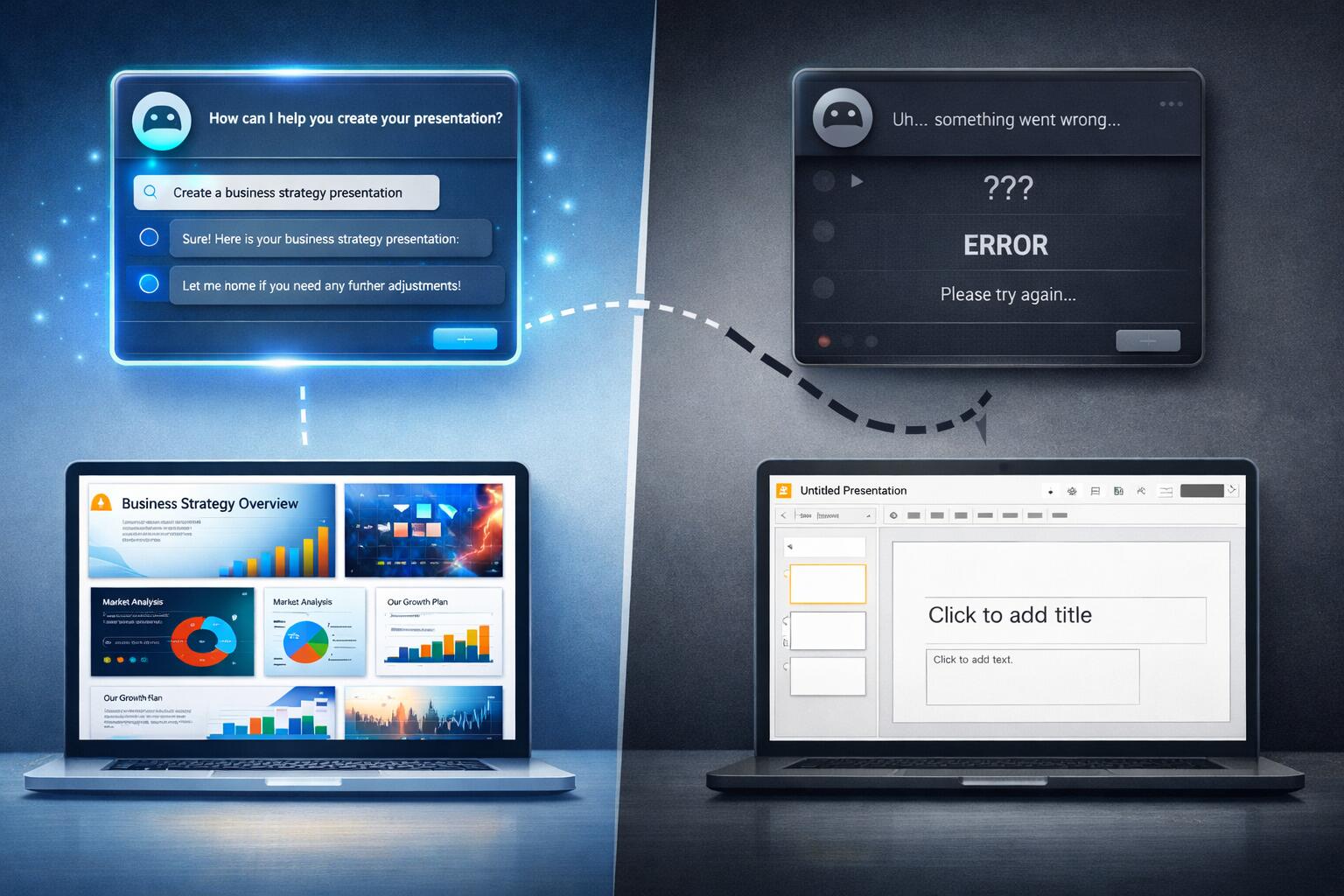

One of the main reasons teams don’t see performance benefits from AI is simple: they’re using it for the wrong things.

AI can make you faster on some tasks and slower on others. If the mix is wrong—if people lean on AI for complex design, deep debugging, and security-sensitive code while underusing it for docs, tests, and boilerplate—then overall you feel no gain or even a net loss. The tool gets blamed, but the issue is task fit.

For teams struggling to see AI benefits in their software engineering workflows, the first move is to get explicit about where AI helps and where it doesn’t, and to steer usage accordingly.

Where AI Usually Helps (Use It Here)

Documentation and comments: Generating READMEs, API docs, runbooks, and inline comments from code. Low risk, easy to verify, and the benefit (something where there was nothing, or an update where things were stale) is obvious.

Tests for existing code: Suggesting unit or integration tests for code that already works. Scoped, checkable (you can run the tests), and you get a clear before/after (coverage, regressions caught).

Boilerplate and repetitive code: CRUD endpoints, DTOs, config wiring, simple scripts. Boring and mechanical; AI is good at this and mistakes are easy to spot.

Explaining and summarizing: “What does this module do?” “Summarize this PR.” “Explain this error.” The output is for humans; you can correct it quickly. Saves senior engineers’ time and helps onboarding.

Structured refactors: Renames, formatting, “add error handling to these N functions” when the pattern is clear. AI can do the first pass; you review and fix edge cases.

In these areas, the team can often see a direct win: less time on tedious work, faster onboarding, or more tests/docs with similar or less effort.

Where AI Usually Hurts (Use It Less or Not at All)

Architecture and design: System boundaries, data models, and technology choices depend on context, tradeoffs, and organizational constraints. AI doesn’t have that context and tends to produce generic or inconsistent suggestions. Using it here often means rework and confusion—slower, not faster.

Security-sensitive code: Auth, permissions, input validation, crypto. Mistakes are costly and subtle. AI output tends to look correct while failing on edge cases, so verification cost is high and one mistake can wipe out any gain.

Deep debugging: Root-cause analysis in a large codebase often requires understanding history, invariants, and interactions that aren’t in the immediate snippet. AI will guess; you’ll spend time checking. Often faster to reason through it yourself.

Novel or underspecified problems: When the problem is fuzzy or the solution isn’t a standard pattern, AI tends to produce plausible-but-wrong or overcomplicated code. You spend more time fixing than you would have spent writing.

High-context, legacy code: Systems with lots of tribal knowledge, odd conventions, or “don’t touch that” areas. AI will suggest changes that break assumptions you can’t easily document. Review and rollback cost can outweigh any generation speed.

When teams use AI heavily in these areas, they experience slowdowns, more rework, and more skepticism—and report “we don’t see benefits.”

A Simple Framework for Your Team

Make the split explicit so people don’t have to guess:

Green (use AI, expect speedup): Docs, comments, tests for existing code, boilerplate, explanations, simple refactors. Default: use AI for first draft; review and ship.

Yellow (use AI with care): Feature code in well-understood areas, non-security APIs, routine bug fixes. Use AI to suggest, but review thoroughly and keep verification time in mind. If fixing the AI’s output takes longer than writing the code yourself would have, treat the task as red.

Red (don’t use AI, or use only for ideas): Architecture decisions, security-critical paths, deep debugging, legacy hotspots, anything novel or underspecified. Do it yourself or pair; use AI only for optional “what if” ideas, not as the implementation source.

Share this with the team and refine it based on your own experience (e.g. “we found AI is also slow for X, so we moved X to red”).
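One way to make the green/yellow/red list concrete is to keep it as a small lookup the team can edit. The Python sketch below is only an illustration. The task labels, the `zone_for` and `effective_zone` helpers, and the choice to treat unlisted task types as red are all assumptions, not part of any tool. The demotion rule reads the yellow guidance as "fixing the AI's output took longer than writing the code by hand would have."

```python
from enum import Enum


class Zone(Enum):
    GREEN = "green"    # use AI, expect speedup
    YELLOW = "yellow"  # use AI with care
    RED = "red"        # don't use AI, or use only for ideas


# Hypothetical task labels; rename them to match your team's vocabulary.
POLICY = {
    "docs": Zone.GREEN,
    "tests_for_existing_code": Zone.GREEN,
    "boilerplate": Zone.GREEN,
    "explanations": Zone.GREEN,
    "simple_refactor": Zone.GREEN,
    "feature_code_known_area": Zone.YELLOW,
    "non_security_api": Zone.YELLOW,
    "routine_bug_fix": Zone.YELLOW,
    "architecture": Zone.RED,
    "security_critical": Zone.RED,
    "deep_debugging": Zone.RED,
    "legacy_hotspot": Zone.RED,
}


def zone_for(task_type: str) -> Zone:
    """Look up a task's zone. Assumption: unlisted task types count as red."""
    return POLICY.get(task_type, Zone.RED)


def effective_zone(task_type: str, minutes_fixing: float, minutes_by_hand: float) -> Zone:
    """Demote a yellow task to red when fixing the AI's output took longer
    than the (estimated) time to write the code by hand."""
    zone = zone_for(task_type)
    if zone is Zone.YELLOW and minutes_fixing > minutes_by_hand:
        return Zone.RED
    return zone
```

For example, `effective_zone("routine_bug_fix", minutes_fixing=50, minutes_by_hand=30)` returns `Zone.RED`, while the same task with 10 minutes of fixing stays yellow. Green and red tasks are never moved by this rule; changing those is a team decision, not an automatic one.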

How This Helps Teams That Don’t See Benefits

Many struggling teams are using AI across the board. They get some wins (docs, tests) and a lot of pain (complex features, debugging). On balance it feels like no benefit.

When you narrow AI to green (and maybe careful yellow), you get:

- More visible wins – Time saved on docs and tests isn’t diluted by time lost on complex code.

- Less frustration – People stop fighting the tool on tasks where it’s a poor fit.

- Clearer expectations – “We use AI for this, not for that” is something you can measure and improve.

- A path to expand – Once the team sees benefit in green, you can experiment with moving a yellow task to green (e.g. “we’re now comfortable with AI for this kind of API”) instead of forcing AI everywhere.
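The dilution effect behind "more visible wins" is simple arithmetic. The sketch below uses made-up numbers purely for illustration: the hours, the task names, and the `net_hours` helper are all hypothetical, and it assumes that a task where AI is not used costs the same as it did before (a delta of zero).

```python
# Hypothetical hours per sprint: positive = time saved, negative = time lost.
HOURS_DELTA = {
    "docs": 3.0,
    "tests": 4.0,
    "complex_features": -4.5,
    "deep_debugging": -2.5,
}

# Hypothetical set of tasks the team has marked green.
GREEN_TASKS = {"docs", "tests"}


def net_hours(deltas, allowed=None):
    """Sum time deltas, optionally counting only tasks where AI is allowed.

    Tasks outside `allowed` are assumed to be done by hand at their usual
    cost, so they contribute nothing to the total.
    """
    return sum(
        hours
        for task, hours in deltas.items()
        if allowed is None or task in allowed
    )


across_the_board = net_hours(HOURS_DELTA)        # wins cancelled by losses
green_only = net_hours(HOURS_DELTA, GREEN_TASKS)  # only the wins remain

print(f"AI everywhere: {across_the_board:+.1f} h")
print(f"AI on green only: {green_only:+.1f} h")
```

With these invented numbers, using AI everywhere nets out to zero, while restricting it to green tasks keeps the full seven hours saved. Real figures will differ; the point is only that losses on poor-fit tasks can hide real gains elsewhere.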

One Practical Change

If you do only one thing: run a short retro. “In the last two weeks, where did AI clearly save time? Where did it waste time or add rework?” Aggregate the answers and update your green/yellow/red list. Then communicate: “We’re going to use AI more for X and less for Y.” For teams struggling to see benefits, that single change—picking the right tasks—often makes the difference between “AI doesn’t help” and “AI helps when we use it for the right things.”
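Aggregating the retro answers can be as simple as a tally. The sketch below is one possible approach, not a prescribed process. The `(task_type, outcome)` response format, the `"saved"`/`"wasted"` labels, and the `tally_retro` and `suggest_changes` helpers are all assumptions made for illustration.

```python
from collections import Counter


def tally_retro(responses):
    """Return a net score per task type from retro answers.

    `responses` is an iterable of (task_type, outcome) pairs, where outcome
    is "saved" (AI clearly saved time) or "wasted" (AI wasted time or
    added rework). The score is saved votes minus wasted votes.
    """
    saved = Counter()
    wasted = Counter()
    for task_type, outcome in responses:
        if outcome == "saved":
            saved[task_type] += 1
        elif outcome == "wasted":
            wasted[task_type] += 1
        else:
            raise ValueError(f"unknown outcome: {outcome!r}")
    return {task: saved[task] - wasted[task] for task in set(saved) | set(wasted)}


def suggest_changes(net_scores):
    """Split task types into 'use AI more' and 'use AI less' candidates.

    Tasks with a net score of zero are left out: the retro gave no clear signal.
    """
    use_more = sorted(task for task, score in net_scores.items() if score > 0)
    use_less = sorted(task for task, score in net_scores.items() if score < 0)
    return use_more, use_less


# Hypothetical answers from a two-week retro.
answers = [
    ("docs", "saved"),
    ("docs", "saved"),
    ("tests", "saved"),
    ("deep_debugging", "wasted"),
    ("deep_debugging", "wasted"),
    ("feature_code", "saved"),
    ("feature_code", "wasted"),
]

use_more, use_less = suggest_changes(tally_retro(answers))
print("Use AI more for:", ", ".join(use_more))
print("Use AI less for:", ", ".join(use_less))
```

The output is a starting point for discussion rather than a verdict: a vote count says nothing about how much time was saved or lost, so the team still decides what actually moves between green, yellow, and red.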