Why AI Is Hurting Your Best Engineers Most

The productivity story on AI coding tools has a flattering headline: senior engineers realize nearly five times the productivity gains of junior engineers from AI tools. More experience means better prompts, better evaluation of output, better use of AI on the right tasks. The gap is real and it makes sense.

But there’s a hidden cost buried in that same data. The tasks senior engineers are being asked to spend their time on are changing, and not always in ways that use their strengths well. Increasingly, the work that lands on senior engineers’ plates in AI-augmented teams is validation, review, and debugging of AI-generated code: work that is less interesting, harder than it looks, and a drain on time that used to go to architecture, design, and mentorship.

The Great Toil Shift: AI Didn't Remove Your Drudge Work, It Moved It

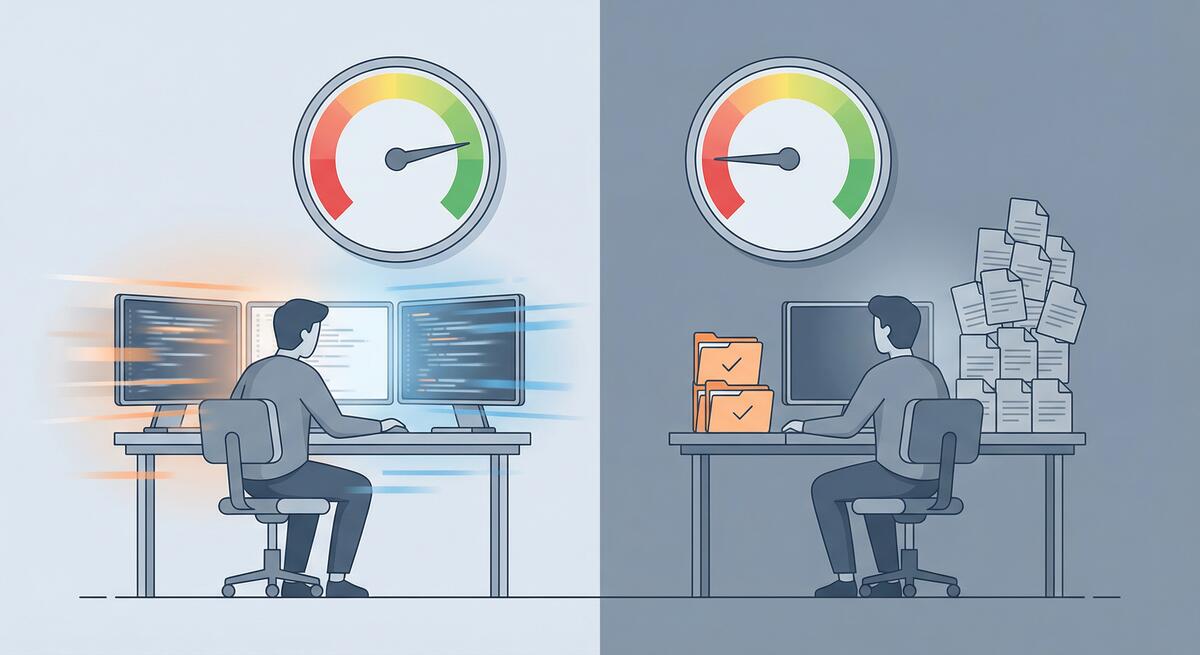

One of the clearest promises of AI coding tools was relief from developer toil: the repetitive, low-value work—debugging boilerplate, writing tests for obvious code, fixing the same style violations—that keeps engineers from doing the interesting parts of their jobs. The premise was simple: AI does the tedious parts, humans do the creative parts.

The data from 2026 tells a more nuanced story. According to Sonar’s analysis and Opsera’s 2026 AI Coding Impact Benchmark Report, the amount of time developers spend on toil hasn’t decreased meaningfully. It’s shifted. High AI users spend roughly the same 23–25% of their workweek on drudge work as low AI users—they’ve just changed what they’re doing with that time.

Cursor vs. Copilot in 2026: What Actually Matters for Your Team

By 2026 the AI coding tool war is a fixture of tech news. Cursor—the AI-native editor from a handful of MIT grads—has reached a $29.3B valuation and around $1B annualized revenue in under two years. GitHub Copilot has crossed 20 million users and sits inside most of the Fortune 100. The comparison pieces write themselves: Cursor vs. Copilot on features, price, workflow. But for teams that have adopted one or both and still don’t see clear performance benefits, the lesson from 2026 isn’t “pick the winning tool.” It’s that the tool is often the wrong place to look.

The METR Study One Year Later: When AI Actually Slows Developers

In early 2025, METR (Model Evaluation and Threat Research) ran a randomized controlled trial that caught the industry off guard. Experienced open-source developers, people with years on mature, high-star repositories, completed real tasks that were randomly assigned to be done either with AI tools (Cursor Pro with Claude) or without. The result: with AI, they took 19% longer to finish. Yet before the trial they expected AI to make them about 24% faster, and after it they believed they’d been about 20% faster. That is a 39-point gap between perception and reality.
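The 39-point figure is simply the difference between the measured and the perceived change in completion time. Here is a minimal sketch of that arithmetic, using only the numbers quoted above and treating "X% faster" as an X% reduction in completion time (that reading, and the variable names, are assumptions made for illustration):

```python
# Change in task completion time, in percent (negative = faster).
# Values come from the paragraph above; names are illustrative only.
expected_change = -24   # forecast before the trial: about 24% faster
perceived_change = -20  # belief after the trial: about 20% faster
measured_change = 19    # observed: tasks took 19% longer


def perception_gap(measured: float, perceived: float) -> float:
    """Percentage-point gap between measured and perceived change."""
    return measured - perceived


gap = perception_gap(measured_change, perceived_change)
print(f"Gap between belief and measurement: {gap} percentage points")
print(f"Gap between forecast and measurement: "
      f"{perception_gap(measured_change, expected_change)} percentage points")
```

The same function applied to the pre-trial forecast shows that the developers' expectations were even further from the measured result than their after-the-fact impressions.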

When AI Slows You Down: Picking the Right Tasks

One of the main reasons teams don’t see performance benefits from AI is simple: they’re using it for the wrong things.

AI can make you faster on some tasks and slower on others. If the mix is wrong—if people lean on AI for complex design, deep debugging, and security-sensitive code while underusing it for docs, tests, and boilerplate—then overall you feel no gain or even a net loss. The tool gets blamed, but the issue is task fit.
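Task fit can be made concrete as a weighted average. The sketch below is a hypothetical illustration: every number and name in it is invented for the example, not taken from any study. It shows how the same tool can net out as a loss or a gain depending on which tasks it is applied to.

```python
# Hypothetical illustration: all figures below are invented for the example.
# Fractional change in time per task type when AI is used (negative = faster).
TIME_CHANGE_WITH_AI = {
    "docs": -0.30,
    "tests": -0.25,
    "boilerplate": -0.35,
    "complex_design": 0.20,
    "deep_debugging": 0.15,
}


def net_time_change(hours_with_ai: dict[str, float]) -> float:
    """Fractional change in total time versus doing the same work without AI.

    hours_with_ai maps a task type to the baseline hours (without AI) of
    work on which the team chooses to use AI.
    """
    baseline = sum(hours_with_ai.values())
    with_ai = sum(
        hours * (1 + TIME_CHANGE_WITH_AI[task])
        for task, hours in hours_with_ai.items()
    )
    return (with_ai - baseline) / baseline


# AI leaned on mostly where it slows people down.
poor_fit = {"complex_design": 10, "deep_debugging": 8, "docs": 1, "tests": 1}
# AI leaned on mostly where it helps.
good_fit = {"docs": 6, "tests": 8, "boilerplate": 5, "complex_design": 1}

print(f"poor fit: {net_time_change(poor_fit):+.1%}")
print(f"good fit: {net_time_change(good_fit):+.1%}")
```

With these made-up inputs the poor-fit mix comes out slower overall and the good-fit mix comes out faster, even though the tool and the per-task effects are identical in both cases.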

Start Here: Three AI Workflows That Show Results in a Week

When a team has tried AI and concluded “we don’t see the benefit,” the worst move is to push harder on the same vague usage. A better move is to pick a few concrete workflows where AI reliably helps, run them for a short time, and measure the outcome. That gives the team something tangible to point to: “this is where AI helped us.”

Here are three workflows that tend to show results within a week. They are a good starting point for teams struggling to see performance benefits from AI.

Measuring What Matters: Getting Real About AI ROI

When a team says they don’t see performance benefits from AI, the first question to ask isn’t “Are you using it enough?” It’s “How are you measuring benefit?”

A lot of organizations track adoption (who has a license, how often they use the tool) or activity (suggestions accepted, chats per day). Those numbers go up and everyone assumes AI is working. But cycle time hasn’t improved, quality hasn’t improved, and the team doesn’t feel faster. So you get a disconnect: the dashboard says success, the team says “we don’t see it.”
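One way to check the dashboard against an outcome is to compare cycle time before and after rollout. This is a minimal sketch, assuming you can export pull-request open and merge timestamps. The records, the timestamp format, and the function names are all hypothetical.

```python
from datetime import datetime
from statistics import median

TIMESTAMP_FORMAT = "%Y-%m-%d %H:%M"  # assumed export format


def cycle_time_hours(opened: str, merged: str) -> float:
    """Hours between a pull request being opened and merged."""
    delta = (datetime.strptime(merged, TIMESTAMP_FORMAT)
             - datetime.strptime(opened, TIMESTAMP_FORMAT))
    return delta.total_seconds() / 3600


def median_cycle_time(prs: list[tuple[str, str]]) -> float:
    """Median open-to-merge time, in hours, for a list of (opened, merged)."""
    return median([cycle_time_hours(opened, merged) for opened, merged in prs])


# Hypothetical pull requests: (opened, merged).
before_rollout = [
    ("2026-01-05 09:00", "2026-01-06 15:00"),
    ("2026-01-07 10:00", "2026-01-08 10:00"),
    ("2026-01-09 08:00", "2026-01-10 20:00"),
]
after_rollout = [
    ("2026-03-02 09:00", "2026-03-03 16:00"),
    ("2026-03-04 11:00", "2026-03-05 09:00"),
    ("2026-03-06 08:00", "2026-03-07 21:00"),
]

before = median_cycle_time(before_rollout)
after = median_cycle_time(after_rollout)
print(f"median cycle time before: {before:.1f}h, after: {after:.1f}h")
print(f"change: {(after - before) / before:+.1%}")
```

In this invented sample the median barely moves. That is the kind of result an adoption or activity dashboard will not show you.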

OpenClaw for Teams That Gave Up on AI

Lots of teams have been here: you tried ChatGPT, Copilot, or a similar assistant. You used it for coding, planning, and support. After a few months, the verdict was “meh”—maybe a bit faster on small tasks, but no real step change, and enough wrong answers and extra verification that it didn’t feel worth the hype. So you dialed back, or gave up on “AI” as a productivity lever.

If that’s you, the next step isn’t to try harder with the same tools. It’s to try a different kind of tool: one built to do a few concrete jobs in your actual environment, with access to your systems and a clear way to see that it’s helping. OpenClaw (and tools like it) can be that next step—especially for teams that are struggling to see any performance benefits from AI in their software engineering workflows.

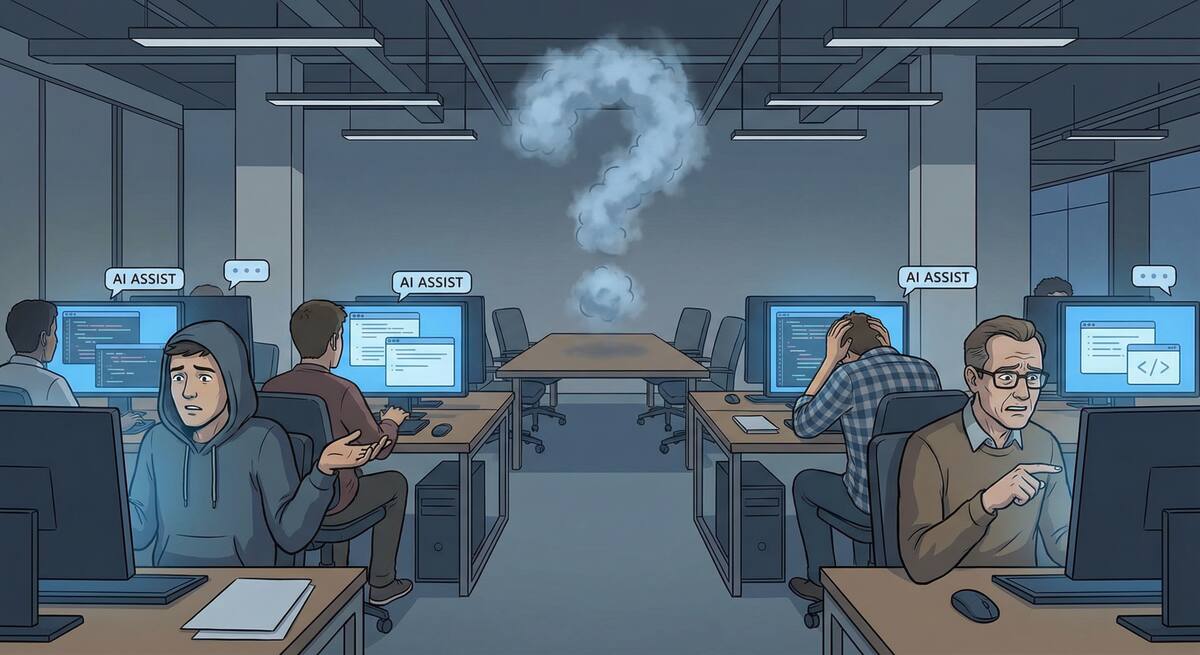

Why Your Team Isn't Seeing AI Benefits (And It's Not the Tools)

You rolled out AI coding tools. You got licenses, ran the demos, and encouraged the team to try them. Months later, the feedback is lukewarm: “We use it sometimes.” “It’s okay for small stuff.” “I’m not sure it’s actually faster.” Nobody’s seeing the dramatic productivity gains the vendor promised.

If this sounds familiar, you’re not alone. Research shows that while 84% of developers use or plan to use AI tools, only 55% find them highly effective—and trust in AI output has dropped sharply. Adoption doesn’t equal impact. The gap between “we have AI” and “AI is helping us ship better, faster” is where most teams get stuck.

The Documentation Problem AI Actually Solves

I’ve spent the past several weeks writing critically about AI tools—the productivity paradox, comprehension debt, burnout risks, vibe coding dangers. Those concerns are real and important.

But I want to end this series on a genuinely positive note, because there’s one area where AI tools deliver clear, consistent, unambiguous value for engineering teams: documentation.

Documentation is the unloved obligation of software development. Everyone agrees it’s important. Nobody wants to write it. The result is that most codebases are woefully underdocumented, and the documentation that does exist is often outdated, incomplete, or wrong.

The AI Burnout Paradox: When Productivity Tools Make Developers Miserable

Here’s an irony that nobody predicted: AI tools designed to make developers more productive are making some of them more miserable.

The promise was straightforward. AI handles the tedious parts of coding—boilerplate, repetitive patterns, documentation lookup—freeing developers to focus on the interesting, creative work. Less toil, more thinking. Less grinding, more innovating.

The reality is more complicated. Research shows that GenAI adoption is heightening burnout by increasing job demands rather than reducing them. Developers report cognitive overload, loss of flow state, rising performance expectations, and a subtle but persistent feeling that their work is being devalued.

OpenClaw for Engineering Teams: Beyond Chatbots

I wrote recently about using OpenClaw (formerly Moltbot) as an automated SDR for sales outreach. That post focused on a business use case, but since then I’ve been exploring what OpenClaw can do for engineering teams specifically—and the results have been more interesting than I expected.

OpenClaw has evolved significantly since its early days. With 173,000+ GitHub stars and a rebrand from Moltbot in late January 2026, it’s moved from a novelty to a genuine platform for local-first AI agents. The key differentiator from tools like ChatGPT or Claude isn’t the AI model—it’s the deep access to your local systems and the skill-based architecture that lets you build custom workflows.

Lessons from a Year of AI Tool Experiments: What Actually Worked

Over the past year, I’ve been experimenting extensively with AI tools—trying to understand what they’re actually good for, where they fall short, and how to use them effectively. I’ve written about several of these experiments: the meeting scheduling failures, the presentation generation disappointments, and most recently, setting up Moltbot as an SDR.

Looking back at all these experiments, patterns emerge. Some things consistently worked. Others consistently didn’t. And a few things surprised me in both directions.

The Case Against Daily Standups in 2026

I’ve been thinking about daily standups lately—specifically, whether they still make sense for engineering teams in 2026.

This isn’t a “standups are terrible” rant. I’ve run teams with effective standups and teams where standups were pure theater. The question isn’t whether standups are universally good or bad; it’s whether the standard daily standup format still fits how engineering teams work today.

My conclusion: for many teams, it doesn’t. Here’s why.

AI Code Review: The Hidden Bottleneck Nobody's Talking About

Here’s a problem that’s creeping up on engineering teams: AI tools are dramatically increasing the volume of code being produced, but they haven’t done anything to increase code review capacity. The bottleneck has shifted.

Where teams once spent the bulk of their time writing code, they now spend increasing time reviewing code—much of it AI-generated. And reviewing AI-generated code is harder than reviewing human-written code in ways that aren’t immediately obvious.

The AI Productivity Paradox: Why Experienced Developers Are Slowing Down

There’s something strange happening in software development right now, and I think we need to talk about it.

Recent research has surfaced a troubling finding: experienced developers working on complex systems are actually 19% slower when using AI coding tools—despite perceiving themselves as working faster. This isn’t a minor discrepancy. It’s a fundamental disconnect between how productive we feel and how productive we actually are.

As someone who’s been experimenting with AI tools extensively (and writing about the results), this finding resonates with my experience. Let me break down what’s happening and what it means for engineering teams.

Transforming Sales Outreach: Using Moltbot as Your AI-Powered SDR

If you’ve been following the AI space lately, you’ve probably heard about Moltbot (also known as OpenClaw)—the open-source AI assistant that skyrocketed to 69,000 GitHub stars in just one month. While most people are using it for personal productivity tasks, there’s a more intriguing use case worth exploring: setting up Moltbot as an automated Sales Development Representative (SDR) for companies.

This post explores how this approach could work, including the setup process, the potential benefits, and yes, the limitations you need to understand before diving in.

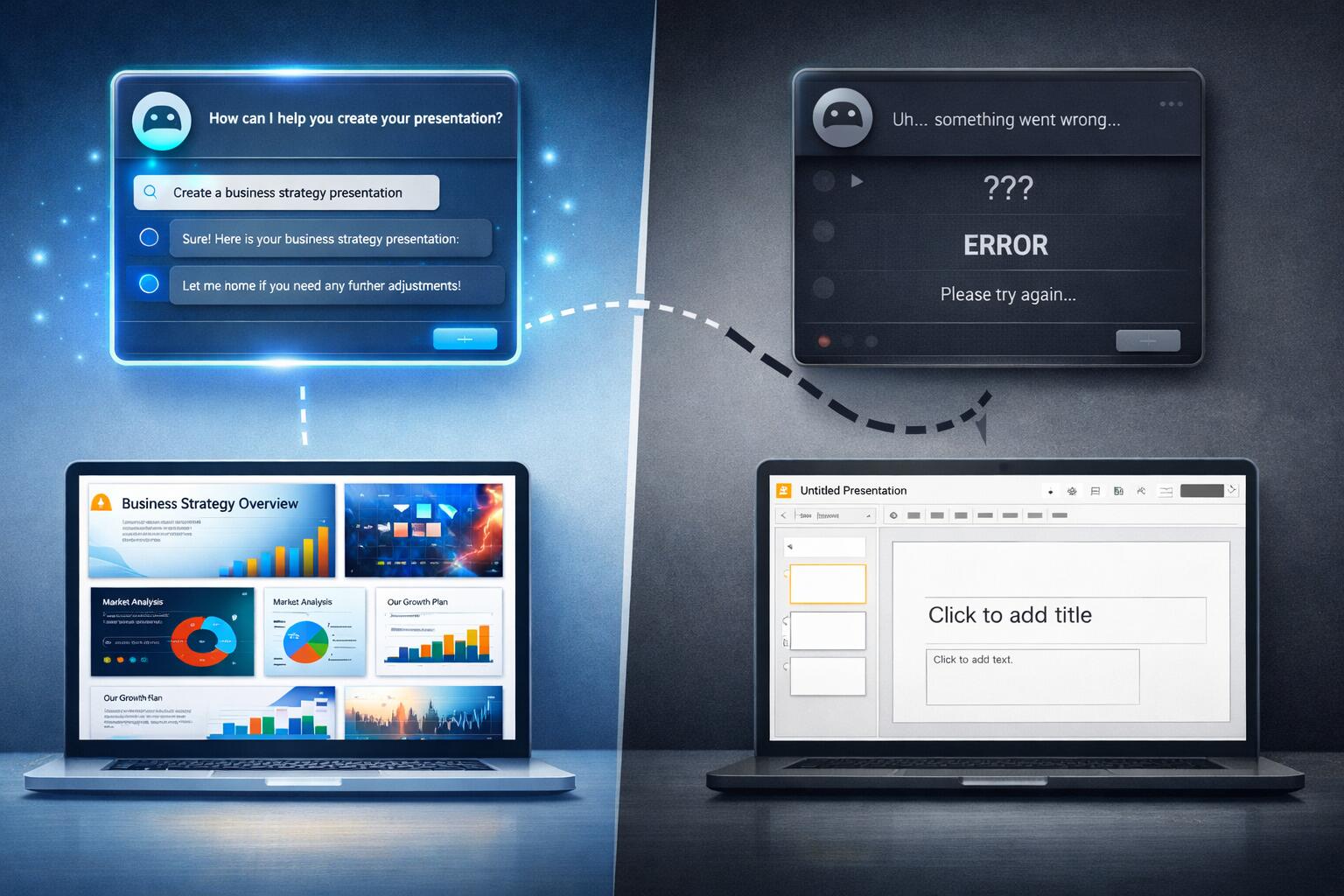

AI Agents and Google Slides: When Promise Meets Reality

I’ve been experimenting with AI agents to help create Google Slides presentations, and I’ve discovered something interesting: they’re great at the planning and ideation phase, but they completely fall apart when it comes to actually delivering on their promises.

The Promising Start

I’ve had genuinely great success using ChatGPT to help with presentation planning. I’ll start a conversation about my presentation topic, share the core material I want to cover, and ChatGPT does an excellent job of:

When AI Assistants Fail: The Meeting Scheduling Reality Check

I recently tried to use AI assistants to solve what should be a straightforward problem: scheduling a meeting with three other people at my office. We’re all Google Workspace users, so I figured this would be a perfect use case for AI—especially given all the hype about AI assistants being able to handle calendar management and scheduling.

Spoiler alert: both ChatGPT and Gemini failed spectacularly.

The ChatGPT Experience

I started with ChatGPT, thinking it would be able to help coordinate schedules. My request was simple: find a time that works for me and three colleagues for a meeting.

Building a Second Brain: A Review on Knowledge Management

I’ve been drowning in information for years. I’m constantly consuming content—technical documentation, team meeting notes, one-on-one conversations, architecture decisions, industry articles, conference talks, and the list goes on. The problem isn’t the volume; it’s that I’ve never had a good system for capturing, organizing, and actually using all of this knowledge when I need it.

That’s why Tiago Forte’s “Building a Second Brain” caught my attention. The premise is simple but powerful: create a system outside your head to store and retrieve information, so your actual brain can focus on thinking and creating rather than remembering.
