GPT-5.4 mini in GitHub Copilot: When Smaller Models Are the Right Product Move

GitHub announced GPT-5.4 mini as generally available for GitHub Copilot in mid-March 2026, positioning it as a faster option that is stronger at codebase exploration.

In a market obsessed with flagship models and leaderboard scores, a GA mini model is easy to dismiss. It is actually one of the more realistic product moves in AI coding.

Not Every Task Needs the Biggest Model

Developer workflows do not share one uniform level of difficulty. A huge share of daily work is:

GitHub Copilot Goes Fully Agentic in JetBrains: Hooks, MCP, and Instruction Files

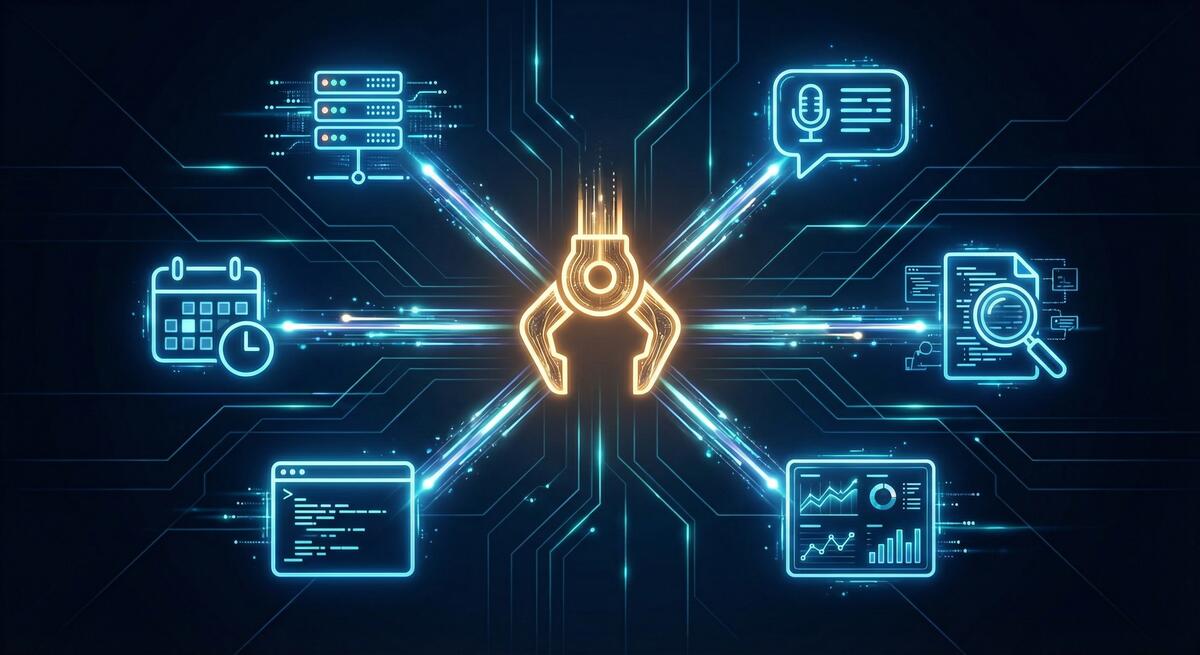

In mid-March 2026, GitHub promoted a major bundle of Copilot agentic capabilities to general availability in JetBrains IDEs, moving key features out of preview for day-to-day use.

The changelog reads like a checklist of what “serious agentic IDE support” now means:

- Custom agents and sub-agents, plus a planning-oriented agent workflow for breaking down complex work

- Agent hooks in public preview, so teams can run custom commands at defined points in an agent session

- MCP auto-approve at server and tool granularity to reduce approval friction when policies allow it

- Automatic discovery of AGENTS.md and CLAUDE.md instruction files during agent sessions

- Auto model selection generally available, with Copilot choosing models based on availability and performance

- An extended reasoning experience for models that expose more explicit thinking, such as Codex-class workflows
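
One way to picture the server-versus-tool granularity mentioned in the list above is a small allowlist check. The sketch below is purely illustrative: the `AutoApprovePolicy` class, its fields, and the server and tool names are hypothetical and do not reflect Copilot's actual configuration format.

```python
from dataclasses import dataclass, field
from typing import Set, Tuple


@dataclass
class AutoApprovePolicy:
    """Hypothetical allowlist; not Copilot's real settings schema."""

    # Servers whose every tool may run without a prompt.
    approved_servers: Set[str] = field(default_factory=set)
    # Individual (server, tool) pairs approved on otherwise gated servers.
    approved_tools: Set[Tuple[str, str]] = field(default_factory=set)

    def is_auto_approved(self, server: str, tool: str) -> bool:
        """Approve if the whole server or this specific tool is allowlisted."""
        return server in self.approved_servers or (server, tool) in self.approved_tools


# Assumed example names, chosen only for illustration.
policy = AutoApprovePolicy(
    approved_servers={"docs-search"},
    approved_tools={("issue-tracker", "read_issue")},
)
```

Under this sketch, anything not listed, such as a hypothetical `delete_issue` tool on the `issue-tracker` server, would still fall back to a manual approval prompt, which keeps the default restrictive.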

Why JetBrains Users Should Care

JetBrains IDEs are where many teams live for deep language support, refactoring, and navigation. Agent features only matter if they meet developers in that workflow, not as a separate tool they resent switching to.

Replit Agent 4 and the Parallel Build Future

Replit’s Agent 4 launch, framed around creativity and speed, includes a product shape that is becoming common in 2026: parallel agent execution across different parts of an application, such as authentication, database work, and UI, with the intent that those streams merge back into a single product.

That is not just a marketing story. It is an architectural bet about how software gets built when generation is cheap and coordination is expensive.

Google's Antigravity Agent in AI Studio: Full-Stack from Prompt to App

Google’s AI Studio update adding Antigravity, described in coverage as a full-stack coding agent, is part of the same wave as other vendors pushing from “generate a file” toward “generate a working application stack.”

Reports highlight several practical capabilities: turning prompts into more complete web apps, built-in Firebase integration for data and authentication, expanded framework support (including common React/Next-style stacks), session persistence across devices, and tooling to manage secrets and external service credentials more safely than copy-pasting keys into chat.

Vercel's Plugin for Coding Agents: Deployment Knowledge as Infrastructure

Vercel’s plugin for coding agents is one of those releases that sounds incremental until you notice what it is really doing: it turns deployment and edge-platform expertise into structured, reusable context that agents can invoke reliably.

According to Vercel’s announcement, the plugin bundles broad platform coverage (47+ skills) and includes specialist agents aimed at deployment optimization scenarios, so agents are not guessing their way through framework-specific hosting details every time.

Trace Every Copilot Agent Commit Back to Its Session Logs

Agent-generated commits used to arrive like any other push: you saw the diff, but not the reasoning, tool calls, or missteps that produced it. In March 2026, GitHub tightened that story.

Copilot coding agent commits can now include an Agent-Logs-Url trailer that points reviewers back to the full session logs for that change. GitHub also highlighted live monitoring of Copilot coding agent logs through integrations such as Raycast.

This is not a flashy model upgrade. It is infrastructure for accountability.
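
For reviewers who want to surface that link in their own tooling, a commit trailer is easy to read out of a commit message. The following is a minimal sketch, assuming the trailer appears as a `Key: value` line in the last paragraph of the message, as git trailers conventionally do. The helper name `find_agent_logs_url` and the example URL are made up for illustration, and the real log URL format may differ.

```python
from typing import Optional

TRAILER_KEY = "Agent-Logs-Url"


def find_agent_logs_url(commit_message: str) -> Optional[str]:
    """Return the Agent-Logs-Url trailer value, or None if it is absent."""
    # Trailers conventionally sit in the final paragraph, after the subject/body.
    paragraphs = [p for p in commit_message.strip().split("\n\n") if p.strip()]
    if len(paragraphs) < 2:
        return None  # A subject line alone carries no trailer block.
    for line in paragraphs[-1].splitlines():
        # Split on the first colon only, so the URL's own colons stay intact.
        key, sep, value = line.partition(":")
        if sep and key.strip().lower() == TRAILER_KEY.lower():
            return value.strip()
    return None


# Placeholder message and URL, not a real session link.
example_message = (
    "Fix pagination off-by-one\n"
    "\n"
    "Adjust the page offset calculation.\n"
    "\n"
    "Agent-Logs-Url: https://example.com/agent-sessions/123\n"
)
logs_url = find_agent_logs_url(example_message)
```

A check like this could run in a review bot or a pre-merge script to flag agent-authored commits that arrive without a log link.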

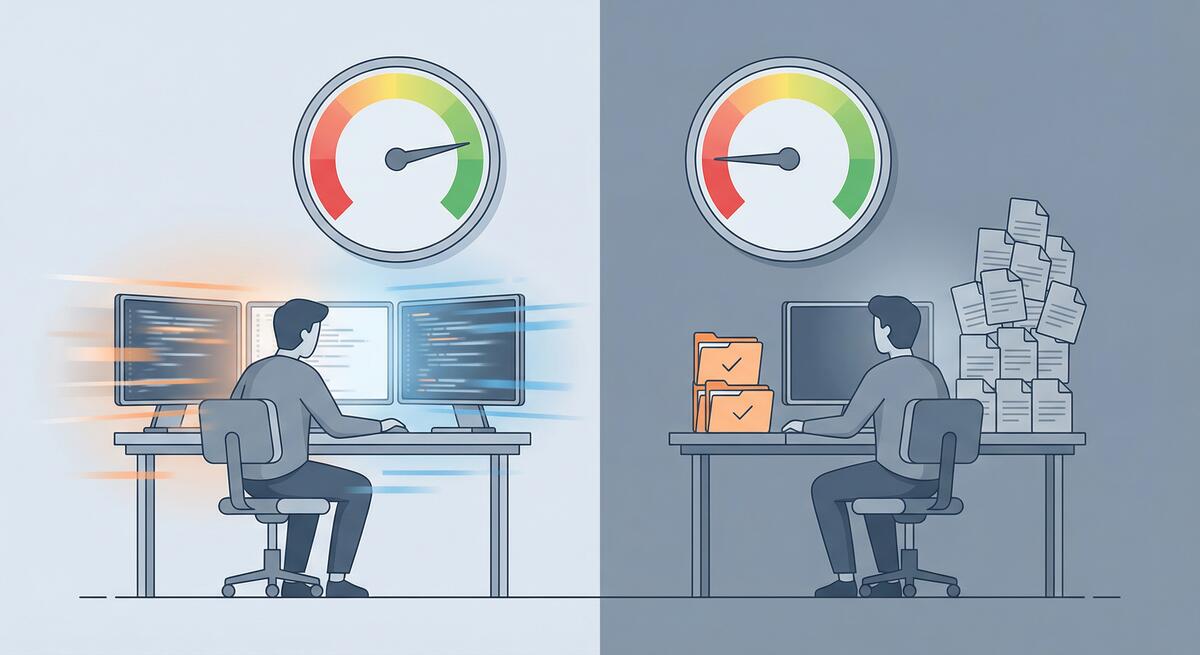

GitHub Says Copilot's Coding Agent Starts Work 50% Faster. Here's Why That Changes the Math

In a March 2026 changelog update, GitHub reported that the Copilot coding agent starts work roughly 50% faster, with optimizations to the cloud-based development environments agents use to spin up and begin executing on a repository.

That sounds like a performance tweak. It is also a shift in how teams should think about agent economics.

Cold Start Was a Hidden Tax

For any agent that runs in an isolated or remote environment, time-to-first-action is not just latency. It is friction that shapes behavior:

The MCP Security Problem Is Really a Least-Privilege Problem

The most important security story around agent infrastructure right now is not a single CVE. It is the growing realization that MCP security is immature by default.

As of March 2026, reporting around MCP security points to more than 30 CVEs, thousands of publicly reachable servers with weak or no authentication, and a still-evolving roadmap for making the protocol more production-ready. One especially concerning figure: roughly 36% of observed MCP servers reportedly accept connections without meaningful authentication.

Why NIST's AI Agent Standards Initiative Matters Right Now

One of the most consequential AI stories this month is not a product launch. It is the NIST AI Agent Standards Initiative.

NIST launched the effort through its Center for AI Standards and Innovation to focus on security, interoperability, and identity for AI agents. The initiative is structured around three pillars: industry-led standards development, open protocol support, and security research. It already has concrete deadlines attached, including a March security request for input and an April identity concept paper.

Gemini CLI Conductor Turns Review into a Structured Report

Google’s automated review update for Gemini CLI Conductor is worth paying attention to for a simple reason: it treats AI review as a structured verification step, not as another free-form chat.

Conductor’s new review mode evaluates generated code across multiple explicit dimensions:

- code quality

- plan compliance

- style and guideline adherence

- test validation

- security review

The output is a categorized report by severity, with exact file references and a path to launch follow-up work. That is an important product choice.
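
To make the idea of a structured report concrete, here is a minimal sketch of findings grouped by severity with file references. It is not Conductor's actual output format: the `Finding` fields, the severity labels, and the sample findings are all assumptions chosen for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List

# Assumed severity scale, most severe first.
SEVERITY_ORDER = ["critical", "high", "medium", "low"]


@dataclass(frozen=True)
class Finding:
    dimension: str  # e.g. "security review" or "test validation"
    severity: str   # one of SEVERITY_ORDER
    file: str
    line: int
    message: str


def group_by_severity(findings: List[Finding]) -> Dict[str, List[Finding]]:
    """Bucket findings by severity, ordered from most to least severe."""
    buckets: Dict[str, List[Finding]] = defaultdict(list)
    for finding in findings:
        if finding.severity not in SEVERITY_ORDER:
            raise ValueError(f"unknown severity: {finding.severity}")
        buckets[finding.severity].append(finding)
    # Emit only the severities that actually occurred, in a stable order.
    return {s: buckets[s] for s in SEVERITY_ORDER if s in buckets}


# Hypothetical findings for illustration only.
report = group_by_severity([
    Finding("style and guideline adherence", "low", "src/utils.py", 12, "Unused import"),
    Finding("security review", "high", "src/auth.py", 48, "Token compared without constant-time check"),
])
```

Because every finding carries a file, a line, and a dimension, the same structure can drive follow-up work directly, which is much harder to do from a free-form chat transcript.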

Claude Code Review and the New Economics of Verification

Anthropic’s new Claude Code Review feature is one of the clearest signs yet that the economics of AI development are shifting from generation toward verification.

The March launch is aimed at Teams and Enterprise customers and uses multiple specialized review agents to examine pull requests in parallel, verify findings, and rank issues by severity. Anthropic says reviews typically take around 20 minutes and cost roughly $15-$25 per PR, and that internally the share of PRs receiving substantive feedback rose from 16% to 54%. For large pull requests over 1,000 lines, 84% reportedly received findings.

JetBrains Air and the Case for the Agent-Native IDE

JetBrains Air, launched in public preview in early March, is one of the more interesting answers yet to a question the AI tooling market keeps circling: do we really want agents bolted onto traditional editors, or do we eventually need environments designed around them from the start?

Air is betting on the second path.

Why Air Is Interesting

Most current AI coding tools still inherit the shape of the pre-AI IDE. There is a primary editor, maybe a chat pane, maybe an agent sidebar, and the user is still clearly the central operator of a mostly traditional workspace.

Cursor Automations and the Shift from Prompting to Policy

One of the most recent product shifts in AI development tooling is Cursor Automations, which turns coding agents into event-driven workflows instead of one-off assistants. The feature can trigger work from commits, Slack messages, timers, and operational events, then route the agent through review, checks, and deployment-style steps, with humans stepping in only at key points.

That may sound like just another convenience layer. It is not. It reflects a deeper change in how teams are thinking about AI tooling.

VS Code's New Agent Features Show What 'Practical' Actually Means

One of the better AI tooling posts of the month came from Microsoft itself: “Making agents practical for real-world development.” That framing is useful because it captures what the market is moving toward. The interesting releases are no longer just about whether an agent can generate code. They are about whether the agent can survive contact with a messy, real workflow.

VS Code 1.110 is a good example of that shift. The March release adds native browser control for agents, better session memory, context compaction for long conversations, installable agent extensions, and a real-time Agent Debug panel. None of those features are flashy in isolation. Together, they show what “practical” now means in agentic development.

Why AI Testing and Validation Tools Are Becoming the Real Leverage Point

One of the clearest signs that the AI coding market is maturing is that some of the most interesting product launches are no longer about generating code. They are about proving the generated code is usable.

TestSprite 2.1, released in early March 2026, is a good example. The company says nearly 100,000 development and QA teams now use the platform to validate AI-generated code, and the latest release claims a 4-5x faster testing engine, visual test editing, automatic pull request testing, and an especially telling benchmark: AI-generated code initially passed only 42% of comprehensive test cases, but jumped to 93% after one iteration with TestSprite’s testing agent.

The Latest AI Code Security Benchmark Is Useful for One Reason

The newest AI code security benchmark is worth reading, but probably not for the reason most people will share it.

The headline result is easy to repeat: across 534 generated code samples from six leading models, 25.1% contained confirmed vulnerabilities after scanning and manual validation. GPT-5.2 performed best at 19.1%. Claude Opus 4.6, DeepSeek V3, and Llama 4 Maverick tied for the worst result at 29.2%. The most common issues were SSRF, injection weaknesses, and security misconfiguration.

OpenAI Symphony and the New Bottleneck: Orchestrating Agents Well

OpenAI’s new Symphony project is one of the most revealing open-source releases in the current coding-agent cycle.

At the surface level, it is an orchestration framework for autonomous software development runs. It connects to issue trackers, spins up isolated implementation runs, coordinates agents, collects proof of work, and helps land changes once they are verified. It is built in Elixir on the BEAM runtime and is clearly optimized for concurrency and fault tolerance.

GitHub Copilot's Real Upgrade Is Choice, Not Just More Models

On February 26, GitHub expanded access to Claude and Codex for Copilot Business and Copilot Pro users, following the earlier February rollout to Pro+ and Enterprise. On paper, this is a pricing and availability update. In practice, it is a product-definition change.

GitHub is turning Copilot from a branded assistant into a control surface for multiple coding agents.

Why This Is Bigger Than It Sounds

For a long time, the framing around Copilot was simple: GitHub had an assistant, and the main question was how good that assistant was. With Claude and Codex available directly inside GitHub workflows, the framing changes.

Visual Studio's Built-In Azure MCP Server Is a Bigger Deal Than It Looks

Microsoft quietly made one of the strongest enterprise bets in the current AI tooling cycle: Azure MCP Server is now built into Visual Studio 2026.

For teams already living in Microsoft’s ecosystem, this is not just another integration announcement. It is a signal that agentic workflows are moving from optional plugin territory into the default shape of mainstream enterprise development.

Why This Matters

MCP, or Model Context Protocol, is becoming the standard way AI agents connect to tools, systems, and data sources. We already knew that mattered in principle. What changes here is that Microsoft has now embedded an MCP-backed cloud workflow directly inside a flagship IDE.

Cursor in JetBrains and the End of IDE Lock-In

One of the quietest but most important developer-tooling stories of March 2026 is that Cursor is now available directly inside JetBrains IDEs through the Agent Client Protocol, or ACP, registry.

At first glance, this looks like a convenience feature. Cursor users can keep their preferred agent while staying in IntelliJ IDEA, PyCharm, or WebStorm. JetBrains users get access to a popular agentic workflow without switching editors. Nice, but not transformative.

Codex Security and the Rise of AI Reviewing AI

The next big shift in AI-assisted software development is not more code generation. It is AI for verification.

OpenAI’s new Codex Security research preview, announced in early March 2026, is a good signal of where the market is going. The product scans repositories commit by commit, builds repository-specific threat models, validates findings in isolated environments, and ranks issues with proposed fixes. OpenAI says early adopters used it to detect more than 11,000 critical and high-severity vulnerabilities while cutting false positives by more than 50%.

Why AI Is Hurting Your Best Engineers Most

The productivity story on AI coding tools has a flattering headline: senior engineers realize nearly five times the productivity gains of junior engineers from AI tools. More experience means better prompts, better evaluation of output, better use of AI on the right tasks. The gap is real and it makes sense.

But there’s a hidden cost buried in that same data. The tasks senior engineers are being asked to spend their time on are changing—and not always in ways that use their strengths well. Increasingly, the work that lands on senior engineers’ plates in AI-augmented teams is validation, review, and debugging of AI-generated code—a category of work that is simultaneously less interesting, harder than it looks, and consuming time that used to go to architecture, design, and mentorship.

The OpenAI Codex App and What Multi-Agent Development Actually Looks Like

In February 2026, OpenAI shipped a standalone Codex app. The headline is straightforward: it lets you manage multiple AI coding agents across projects, with parallel task execution, persistent context, and built-in git tooling. It’s currently available on macOS for paid ChatGPT plan subscribers.

But the headline undersells what’s actually happening. The Codex app isn’t just a better chat interface for code—it’s an early, concrete version of what multi-agent software development looks like when it arrives as a consumer product. Understanding what it actually does (and doesn’t do) matters for any team thinking seriously about AI-assisted development in 2026.

MCP: The Integration Standard That Quietly Became Mandatory

If you were paying attention to AI tooling in late 2024, you heard about the Model Context Protocol (MCP). If you weren’t, you may have missed the quiet transition from “Anthropic’s new open standard” to “the de facto integration layer for AI agents.” By early 2026, MCP had 70+ client applications, 10,000+ active servers, and 97+ million monthly SDK downloads, and in December 2025 it moved to governance under the Agentic AI Foundation, which sits under the Linux Foundation. Anthropic, OpenAI, Google, Microsoft, and Amazon have all adopted it.

The Great Toil Shift: AI Didn't Remove Your Drudge Work, It Moved It

One of the clearest promises of AI coding tools was relief from developer toil: the repetitive, low-value work—debugging boilerplate, writing tests for obvious code, fixing the same style violations—that keeps engineers from doing the interesting parts of their jobs. The premise was simple: AI does the tedious parts, humans do the creative parts.

The data from 2026 tells a more nuanced story. According to Sonar’s analysis and Opsera’s 2026 AI Coding Impact Benchmark Report, the amount of time developers spend on toil hasn’t decreased meaningfully. It’s shifted. High AI users spend roughly the same 23–25% of their workweek on drudge work as low AI users—they’ve just changed what they’re doing with that time.

GitHub's Agent Control Plane: What Enterprise AI Governance Actually Looks Like

On February 26, 2026, GitHub made its Enterprise AI Controls and agent control plane generally available. The timing is notable: it came in the same week that Claude and Codex became available for Copilot Business and Pro users, and as GitHub Enterprise Server 3.20 hit release candidate. The GA isn’t a coincidence—it reflects an industry that has moved from “should we let agents into our codebase?” to “how do we govern agents that are already in our codebase?”

The PR Tsunami: What AI Code Volume Is Doing to Your Review Process

AI coding tools delivered on their core promise: developers write less, ship more. Teams using AI complete 21% more tasks. PR volume has exploded—some teams that previously handled 10–15 pull requests per week are now seeing 50–100. In a narrow sense, that’s a win.

But there’s a tax on that win that most engineering leaders aren’t accounting for: AI-generated PRs wait 4.6x longer for review than human-written code, despite actually being reviewed 2x faster once someone picks them up. The bottleneck isn’t coding anymore. It’s review capacity, and it’s getting worse as AI generation accelerates.
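
A quick worked example shows why the wait dominates. The 4.6x and 2x multipliers come from the figures above, while the baseline of a 10-hour wait and a 1-hour review for a human-written PR is an assumption picked only to make the arithmetic concrete.

```python
# Multipliers quoted in the text above.
WAIT_MULTIPLIER = 4.6   # AI-generated PRs wait this much longer for pickup
REVIEW_SPEEDUP = 2.0    # ...but are reviewed this much faster once picked up


def ai_pr_latency(human_wait_hours: float, human_review_hours: float) -> float:
    """Estimate end-to-end review latency for an AI-generated PR."""
    wait = human_wait_hours * WAIT_MULTIPLIER
    review = human_review_hours / REVIEW_SPEEDUP
    return wait + review


# Assumed baseline for a human-written PR (illustrative, not measured).
human_wait_hours = 10.0
human_review_hours = 1.0

human_total = human_wait_hours + human_review_hours
ai_total = ai_pr_latency(human_wait_hours, human_review_hours)

print(f"human-written PR: {human_total:.1f} h, AI-generated PR: {ai_total:.1f} h")
```

With these assumed inputs the faster review saves half an hour while the longer queue adds 36 hours, so total latency is more than four times higher despite the quicker review.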

Vibe Coding Won. Now What?

Vibe coding went from a niche provocation to the dominant paradigm of software development in less than 18 months. Collins English Dictionary named it 2025 Word of the Year. OpenAI co-founder Andrej Karpathy coined the term in February 2025; by early 2026, approximately 92% of US developers use AI coding tools daily, and 46% of all new code is AI-generated. The adoption battle is over—vibe coding won.

So why does it feel like the victory lap is getting complicated?

SERA and the Case for Open-Source Coding Agents That Know Your Repo

If your team has tried Cursor, Copilot, or other AI coding tools and found them underwhelming on your codebase—wrong conventions, missing context, generic suggestions—you’re running into a fundamental limit: those models are trained and optimized for the average repo, not yours. In early 2026, AI2 (Allen Institute for AI) released SERA (Soft-Verified Efficient Repository Agents), an open-source family of coding agents built for something different: specialization to your repository through fine-tuning, at a cost that makes it realistic for more teams.

Cursor vs. Copilot in 2026: What Actually Matters for Your Team

By 2026 the AI coding tool war is a fixture of tech news. Cursor—the AI-native editor from a handful of MIT grads—has reached a $29.3B valuation and around $1B annualized revenue in under two years. GitHub Copilot has crossed 20 million users and sits inside most of the Fortune 100. The comparison pieces write themselves: Cursor vs. Copilot on features, price, workflow. But for teams that have adopted one or both and still don’t see clear performance benefits, the lesson from 2026 isn’t “pick the winning tool.” It’s that the tool is often the wrong place to look.

Prompt Injection Is Coming for Your Coding Agent

In early 2026, a critical vulnerability in Anthropic’s Claude Code made the rounds: CVE-2026-24887, which let an attacker bypass the user-approval prompt and execute arbitrary commands via prompt injection. Around the same time, researchers demonstrated prompt-injection-to-RCE chains in GitHub Actions—an external PR could trigger Claude Code in a workflow and, with a malicious payload in the PR title, achieve code execution with workflow privileges. Real incidents have shown agents exfiltrating SSH keys and credentials from hidden instructions in docs or comments. NIST has called prompt injection “generative AI’s greatest security flaw,” and it’s #1 on the OWASP LLM Top 10. If your team is rolling out AI coding assistants or agentic workflows, this isn’t theoretical. It’s the threat model you need to plan for.

Why Mandating AI Tools Backfires: Lessons from Amazon and Spotify

Two stories dominated the AI-and-work conversation in early 2026. Amazon told its engineers that 80% had to use AI for coding at least weekly—and that the approved tool was Kiro, Amazon’s in-house assistant, with “no plan to support additional third-party AI development tools.” Around the same time, Spotify’s CEO said the company’s best engineers hadn’t written code by hand since December; they generate code with AI and supervise it. Both were framed as the future. Both also illustrate why mandating AI tools is a bad way to get real performance benefits, especially for teams that are already skeptical or struggling to see gains.

OpenClaw in 2026: Security Reality Check and Where It Still Shines

OpenClaw (the project formerly known as Moltbot and Clawdbot) had a wild start to 2026: explosive growth, a rebrand after Anthropic’s trademark request, and adoption from Silicon Valley to major Chinese tech firms. By February it had sailed past 180,000 GitHub stars and drawn millions of visitors. Then the other shoe dropped. Security researchers disclosed critical issues—including CVE-2026-25253 and the ClawHavoc campaign, with hundreds of malicious skills and thousands of exposed instances. The gap between hype and reality became impossible to ignore.

GitHub Agentic Workflows Are Here: What They Change (and What They Don't)

In February 2026, GitHub launched Agentic Workflows in technical preview—automation that uses AI to run repository tasks from natural-language instructions instead of only static YAML. It’s part of a broader idea GitHub calls “Continuous AI”: the agentic evolution of continuous integration, where judgment-heavy work (triage, review, docs, CI debugging) can be handled by coding agents that understand context and intent.

If you’re weighing whether to try them, it helps to be clear on what they are, what they’re good for, and what stays the same.

The METR Study One Year Later: When AI Actually Slows Developers

In early 2025, METR (Model Evaluation and Threat Research) ran a randomized controlled trial that caught the industry off guard. Experienced open-source developers, people with years on mature, high-star repositories, were randomly assigned to complete real tasks either with AI tools (Cursor Pro with Claude) or without. The result: with AI, they took 19% longer to finish. Yet before the trial they expected AI to make them about 24% faster, and after it they believed they’d been about 20% faster. That is a 39-point gap between perception (20% faster) and reality (19% slower).

Getting Your Team Unstuck: A Manager's Guide to AI Adoption

You’ve got AI tools in place. You’ve encouraged the team to use them. But the feedback is lukewarm or negative: “We tried it.” “It’s not really faster.” “We don’t see the benefit.” As a manager, you’re stuck between leadership expecting ROI and a team that doesn’t feel it.

The way out isn’t to push harder or to give up. It’s to change how you’re leading the adoption: create safety to experiment, narrow the focus so wins are visible, and align incentives so that “seeing benefits” is something the team can actually achieve. This guide is for engineering managers whose teams are struggling to see any performance benefits from AI in their software engineering workflows—and who want to turn that around.

When AI Slows You Down: Picking the Right Tasks

One of the main reasons teams don’t see performance benefits from AI is simple: they’re using it for the wrong things.

AI can make you faster on some tasks and slower on others. If the mix is wrong—if people lean on AI for complex design, deep debugging, and security-sensitive code while underusing it for docs, tests, and boilerplate—then overall you feel no gain or even a net loss. The tool gets blamed, but the issue is task fit.

Start Here: Three AI Workflows That Show Results in a Week

When a team has tried AI and concluded “we don’t see the benefit,” the worst move is to push harder on the same vague usage. A better move is to pick a few concrete workflows where AI reliably helps, run them for a short time, and measure the outcome. That gives the team something tangible to point to: “this is where AI helped us.”

Here are three workflows that tend to show results within a week and are a good place to start for teams struggling to see performance benefits from AI in their software engineering workflows.

The Trust Collapse: Why 84% Use AI But Only 33% Trust It

Usage of AI coding tools is at an all-time high: the vast majority of developers use or plan to use them. Trust in AI output, meanwhile, has fallen. In recent surveys, only about a third of developers say they trust AI output, with a tiny fraction “highly” trusting it—and experienced developers are the most skeptical.

That gap—high adoption, low trust—explains a lot about why teams “don’t see benefits.” When you don’t trust the output, you verify everything. Verification eats the time AI saves, so net productivity is flat or negative. Or you use AI only for low-stakes work and conclude it’s “not for real code.” Either way, the team doesn’t experience AI as a performance win.

Measuring What Matters: Getting Real About AI ROI

When a team says they don’t see performance benefits from AI, the first question to ask isn’t “Are you using it enough?” It’s “How are you measuring benefit?”

A lot of organizations track adoption (who has a license, how often they use the tool) or activity (suggestions accepted, chats per day). Those numbers go up and everyone assumes AI is working. But cycle time hasn’t improved, quality hasn’t improved, and the team doesn’t feel faster. So you get a disconnect: the dashboard says success, the team says “we don’t see it.”

OpenClaw for Teams That Gave Up on AI

Lots of teams have been here: you tried ChatGPT, Copilot, or a similar assistant. You used it for coding, planning, and support. After a few months, the verdict was “meh”—maybe a bit faster on small tasks, but no real step change, and enough wrong answers and extra verification that it didn’t feel worth the hype. So you dialed back, or gave up on “AI” as a productivity lever.

If that’s you, the next step isn’t to try harder with the same tools. It’s to try a different kind of tool: one built to do a few concrete jobs in your actual environment, with access to your systems and a clear way to see that it’s helping. OpenClaw (and tools like it) can be that next step—especially for teams that are struggling to see any performance benefits from AI in their software engineering workflows.

Why Your Team Isn't Seeing AI Benefits (And It's Not the Tools)

You rolled out AI coding tools. You got licenses, ran the demos, and encouraged the team to try them. Months later, the feedback is lukewarm: “We use it sometimes.” “It’s okay for small stuff.” “I’m not sure it’s actually faster.” Nobody’s seeing the dramatic productivity gains the vendor promised.

If this sounds familiar, you’re not alone. Research shows that while 84% of developers use or plan to use AI tools, only 55% find them highly effective—and trust in AI output has dropped sharply. Adoption doesn’t equal impact. The gap between “we have AI” and “AI is helping us ship better, faster” is where most teams get stuck.

The Documentation Problem AI Actually Solves

I’ve spent the past several weeks writing critically about AI tools—the productivity paradox, comprehension debt, burnout risks, vibe coding dangers. Those concerns are real and important.

But I want to end this series on a genuinely positive note, because there’s one area where AI tools deliver clear, consistent, unambiguous value for engineering teams: documentation.

Documentation is the unloved obligation of software development. Everyone agrees it’s important. Nobody wants to write it. The result is that most codebases are woefully underdocumented, and the documentation that does exist is often outdated, incomplete, or wrong.

Your AI-Generated Codebase Is a Liability

If a quarter of Y Combinator startups have codebases that are over 95% AI-generated, we should probably talk about what that means when those companies get acquired, audited, or sued.

AI-generated code looks clean. It follows conventions. It passes linting. It often has reasonable test coverage. By most surface-level metrics, it appears to be high-quality software.

But underneath the polished exterior, AI-generated codebases carry risks that traditional codebases don’t. Security vulnerabilities that look correct. Intellectual property questions that don’t have clear answers. Structural problems that emerge only under stress. Dependency chains that nobody consciously chose.

Will Junior Developers Survive the AI Era?

Every few months, I see another hot take claiming that junior developer roles are dead. AI can write code faster than entry-level developers, the argument goes, so why would companies hire someone who’s slower and less reliable than Copilot?

It’s a scary narrative if you’re early in your career. It’s also wrong—but not entirely wrong, which makes it worth examining carefully.

Junior developers aren’t becoming obsolete. But the path into the profession is changing, and both new developers and the leaders who hire them need to understand how.

The AI Burnout Paradox: When Productivity Tools Make Developers Miserable

Here’s an irony that nobody predicted: AI tools designed to make developers more productive are making some of them more miserable.

The promise was straightforward. AI handles the tedious parts of coding—boilerplate, repetitive patterns, documentation lookup—freeing developers to focus on the interesting, creative work. Less toil, more thinking. Less grinding, more innovating.

The reality is more complicated. Research shows that GenAI adoption is heightening burnout by increasing job demands rather than reducing them. Developers report cognitive overload, loss of flow state, rising performance expectations, and a subtle but persistent feeling that their work is being devalued.

Comprehension Debt: When Your Team Can't Explain Its Own Code

Technical debt is a concept every engineering leader understands. You take a shortcut now, knowing you’ll need to come back and fix it later. The debt is visible: you can point to the code, explain what’s wrong with it, and estimate the cost of fixing it.

AI-generated code is introducing something different—and arguably worse. Researchers have started calling it “comprehension debt”: shipping code that works but that nobody on your team can fully explain.

Vibe Coding: The Most Dangerous Idea in Software Development

Andrej Karpathy—former director of AI at Tesla and OpenAI co-founder—coined a term last year that’s become the most divisive concept in software development: “vibe coding.”

His description was disarmingly casual: an approach “where you fully give in to the vibes, embrace exponentials, and forget that the code even exists.” In practice, it means letting AI tools take the lead on implementation while you focus on describing what you want rather than how to build it. Accept the suggestions, trust the output, don’t overthink the details.

OpenClaw for Engineering Teams: Beyond Chatbots

I wrote recently about using OpenClaw (formerly Moltbot) as an automated SDR for sales outreach. That post focused on a business use case, but since then I’ve been exploring what OpenClaw can do for engineering teams specifically—and the results have been more interesting than I expected.

OpenClaw has evolved significantly since its early days. With 173,000+ GitHub stars and a rebrand from Moltbot in late January 2026, it’s moved from a novelty to a genuine platform for local-first AI agents. The key differentiator from tools like ChatGPT or Claude isn’t the AI model—it’s the deep access to your local systems and the skill-based architecture that lets you build custom workflows.

Lessons from a Year of AI Tool Experiments: What Actually Worked

Over the past year, I’ve been experimenting extensively with AI tools—trying to understand what they’re actually good for, where they fall short, and how to use them effectively. I’ve written about several of these experiments: the meeting scheduling failures, the presentation generation disappointments, and most recently, setting up Moltbot as an SDR.

Looking back at all these experiments, patterns emerge. Some things consistently worked. Others consistently didn’t. And a few things surprised me in both directions.

AI Code Review: The Hidden Bottleneck Nobody's Talking About

Here’s a problem that’s creeping up on engineering teams: AI tools are dramatically increasing the volume of code being produced, but they haven’t done anything to increase code review capacity. The bottleneck has shifted.

Where teams once spent the bulk of their time writing code, they now spend increasing time reviewing code—much of it AI-generated. And reviewing AI-generated code is harder than reviewing human-written code in ways that aren’t immediately obvious.

From Code Writer to AI Orchestrator: The Changing Developer Role

There’s a narrative circulating in tech circles: developers are evolving from “code writers” to “AI orchestrators.” The story goes that instead of typing code ourselves, we’ll direct AI agents that write code for us. Our job becomes coordination, review, and high-level direction rather than implementation.

It’s a compelling vision. It’s also significantly oversimplified.

Research shows that developers can currently “fully delegate” only 0 to 20% of tasks to AI. That’s not nothing, but it’s far from the wholesale transformation some predict. The reality of how developer roles are changing is more nuanced, and more interesting, than the hype suggests.

GitHub Copilot Agent Mode: First Impressions and Practical Limits

GitHub Copilot’s agent mode represents a significant shift in how AI coding assistants work. Instead of just suggesting completions as you type, agent mode can iterate on its own code, catch and fix errors automatically, suggest terminal commands, and even analyze runtime errors to propose fixes.

This isn’t AI-assisted coding anymore. It’s AI-directed coding, where you’re less of a writer and more of an orchestrator. After spending time with this new capability, I have thoughts on what it delivers, where it falls short, and how to use it effectively.

The 32% Problem: Why Most Engineering Orgs Are Flying Blind on AI Governance

Here’s a statistic that should concern every engineering leader: only 32% of organizations have formal AI governance policies for their engineering teams. Another 41% rely on informal guidelines, and 27% have no governance at all.

Meanwhile, 91% of engineering leaders report that AI has improved developer velocity and code quality. But here’s the kicker: only 25% of them have actual data to support that claim.

We’re flying blind. Most organizations have adopted AI tools without the instrumentation to know whether they’re helping or hurting, and without the policies to manage the risks they introduce.

The AI Productivity Paradox: Why Experienced Developers Are Slowing Down

There’s something strange happening in software development right now, and I think we need to talk about it.

Recent research from METR has surfaced a troubling finding: experienced developers working on complex systems are actually 19% slower when using AI coding tools, despite perceiving themselves as working faster. This isn’t a minor discrepancy. It’s a fundamental disconnect between how productive we feel and how productive we actually are.

As someone who’s been experimenting with AI tools extensively (and writing about the results), this finding resonates with my experience. Let me break down what’s happening and what it means for engineering teams.

Transforming Sales Outreach: Using Moltbot as Your AI-Powered SDR

If you’ve been following the AI space lately, you’ve probably heard about Moltbot (also known as OpenClaw)—the open-source AI assistant that skyrocketed to 69,000 GitHub stars in just one month. While most people are using it for personal productivity tasks, there’s a more intriguing use case worth exploring: setting up Moltbot as an automated Sales Development Representative (SDR) for companies.

This post explores how this approach could work, including the setup process, the potential benefits, and yes, the limitations you need to understand before diving in.

AI Agents and Google Slides: When Promise Meets Reality

I’ve been experimenting with AI agents to help create Google Slides presentations, and I’ve discovered something interesting: they’re great at the planning and ideation phase, but they completely fall apart when it comes to actually delivering on their promises.

The Promising Start

I’ve had genuinely great success using ChatGPT to help with presentation planning. I’ll start a conversation about my presentation topic, share the core material I want to cover, and ChatGPT does an excellent job of:

When AI Assistants Fail: The Meeting Scheduling Reality Check

I recently tried to use AI assistants to solve what should be a straightforward problem: scheduling a meeting with three other people at my office. We’re all Google Workspace users, so I figured this would be a perfect use case for AI—especially given all the hype about AI assistants being able to handle calendar management and scheduling.

Spoiler alert: both ChatGPT and Gemini failed spectacularly.

The ChatGPT Experience

I started with ChatGPT, thinking it would be able to help coordinate schedules. My request was simple: find a time that works for me and three colleagues for a meeting.
