MCP: The Integration Standard That Quietly Became Mandatory

- 4 minutes - Mar 6, 2026

- #ai #mcp #integration #developer-tools #enterprise

If you were paying attention to AI tooling in late 2024, you heard about the Model Context Protocol (MCP). If you weren’t, you may have missed the quiet transition from “Anthropic’s new open standard” to “the de facto integration layer for AI agents.” By early 2026, MCP has 70+ client applications, 10,000+ active servers, and 97+ million monthly SDK downloads. In December 2025, it moved to governance under the Agentic AI Foundation, part of the Linux Foundation. Anthropic, OpenAI, Google, Microsoft, and Amazon have all adopted it.

This is the infrastructure story that got overshadowed by the more dramatic AI headlines. It’s worth understanding, especially if you’re an engineering leader deciding how your team’s AI tools should connect to your existing systems.

The Problem MCP Solves

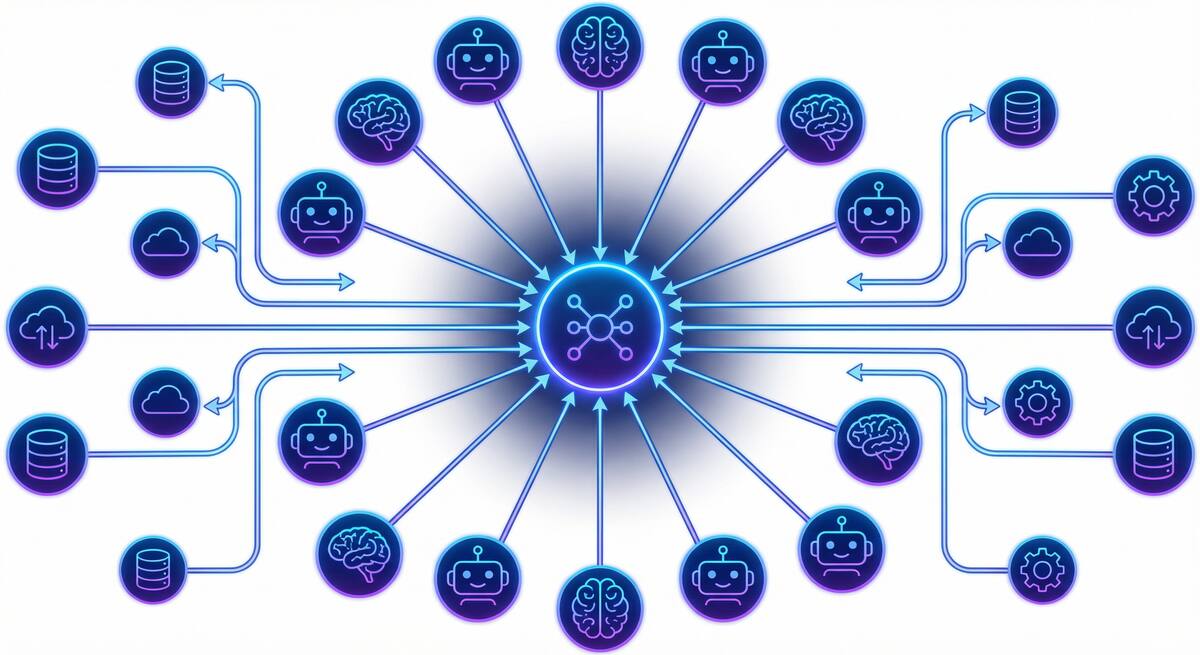

Every AI tool that wants to access enterprise systems faces the same challenge: how do you connect an AI model to a Jira board, a Postgres database, a Kubernetes cluster, a Slack workspace, or an internal API? Before MCP, the answer was custom connectors: with N AI tools and M systems, every pairing needed its own integration, up to N×M in total. Teams that wanted Cursor to access their internal documentation had to build a one-off connector. Teams that switched AI tools had to rebuild their integrations.

MCP solves the N×M problem with a standard protocol. An AI client (Cursor, Claude Code, Copilot, your own agent) implements MCP once, and any MCP server it is pointed at, whether that server exposes a database, an API, a file system, or a custom internal tool, becomes usable without client-specific glue code. Build the server once, use it with any MCP-compatible client.
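To make the N×M arithmetic concrete, here is a small sketch. The function names and the example counts (4 AI tools, 5 systems) are illustrative assumptions, not figures from any report.

```python
def bespoke_integrations(clients: int, systems: int) -> int:
    """Without a shared protocol: one custom connector per (client, system) pair."""
    return clients * systems


def mcp_integrations(clients: int, systems: int) -> int:
    """With a shared protocol: one client implementation per tool,
    plus one server per system."""
    return clients + systems


if __name__ == "__main__":
    clients, systems = 4, 5  # illustrative numbers only
    print(bespoke_integrations(clients, systems))  # 20
    print(mcp_integrations(clients, systems))      # 9
```

The gap widens as either number grows, which is why the pain showed up first in organizations with many internal systems.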

According to CData’s 2026 State of AI Data Connectivity Report, 71% of AI teams spend more than a quarter of their implementation time on data integration alone. MCP is the infrastructure response to that problem.

What’s Actually Available Right Now

The ecosystem has moved fast:

Docker MCP Toolkit and Catalog: Docker released a containerized registry of pre-built MCP servers with secrets management and no language runtime installation required. You get MCP servers for common tools packaged in containers, deployable on existing infrastructure.

Kong MCP Registry: Kong’s technical preview provides centralized service discovery for AI agents across enterprise systems, with observability and cost tracking—the API gateway model applied to MCP.

Docker MCP Gateway: An open-source proxy that acts as a centralized frontend for MCP servers, handling routing, authentication, and translation. Useful if you want a single governance point for all MCP traffic.

SDK coverage: TypeScript, Python, Go, Kotlin, Java, C#, Swift, Rust, Ruby, and PHP. If your internal systems have an API, you can write an MCP server for them in your team’s language of choice.

GitHub MCP Enterprise Allow Lists (in preview): GitHub’s agent control plane includes MCP governance, which will let enterprise admins specify which MCP servers Copilot agents can connect to.

Why Engineering Leaders Need to Care Now

The practical reality: if your developers are using Cursor, Claude Code, or Copilot, those tools either already support MCP or are adding support. When your developers say “I connected my AI tool to our Jira” or “I gave the agent access to our internal API docs,” they are likely using MCP, whether or not they use that term. If you don’t have a policy about which MCP servers your AI tools can access, that decision is being made ad hoc, one developer environment at a time.

This is not abstract. MCP servers have access to whatever the underlying system exposes. An MCP server connected to your production database that an AI agent can query is a real attack surface. Prompt injection attacks—where malicious content in a data source causes an agent to take unintended actions—are more dangerous when the agent has MCP-connected tools at its disposal.
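One way to shrink that attack surface is to narrow what the underlying system exposes before any agent touches it. The sketch below uses SQLite from the Python standard library as a stand-in for a production database, and the `open_read_only` helper and `tickets` table are hypothetical. The point is only that a read-only connection makes writes fail at the database layer, regardless of what a prompt-injected agent asks for.

```python
import os
import sqlite3
import tempfile
from pathlib import Path


def open_read_only(db_path: str) -> sqlite3.Connection:
    """Open a SQLite database in read-only mode via a file: URI."""
    return sqlite3.connect(Path(db_path).as_uri() + "?mode=ro", uri=True)


def demo() -> str:
    with tempfile.TemporaryDirectory() as tmp:
        db_path = os.path.join(tmp, "tickets.db")

        # Seed a throwaway database with a normal read-write connection.
        setup = sqlite3.connect(db_path)
        setup.execute("CREATE TABLE tickets (id INTEGER PRIMARY KEY, title TEXT)")
        setup.execute("INSERT INTO tickets (title) VALUES ('Rotate API keys')")
        setup.commit()
        setup.close()

        # This is the connection a tool handler would be given.
        conn = open_read_only(db_path)
        try:
            rows = conn.execute("SELECT title FROM tickets").fetchall()
            assert rows == [("Rotate API keys",)]  # reads still work
            try:
                conn.execute("DELETE FROM tickets")
            except sqlite3.OperationalError:
                return "write blocked"
            return "write allowed"
        finally:
            conn.close()


if __name__ == "__main__":
    print(demo())
```

For Postgres or another server database, the equivalent would typically be a dedicated role with read-only grants. Either way, the restriction lives in the data system rather than in the agent's instructions.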

The questions to ask your team:

- Which AI tools do we use that support MCP, and which MCP servers are they configured with?

- Do we have internal MCP servers, and if so, what systems do they expose?

- Who can add MCP servers to a developer’s environment, and is that tracked anywhere?

- Are we prepared for GitHub’s MCP enterprise allow list feature when it goes GA?
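The first and third questions can be partly answered with a script. The sketch below assumes a client configuration shaped like the `mcpServers` JSON object that several MCP clients use. The server names, commands, and allow list are hypothetical, and your tools may store their configuration differently.

```python
import json


def unapproved_servers(config_text: str, allow_list: set[str]) -> list[str]:
    """Return configured MCP server names that are not on the allow list."""
    config = json.loads(config_text)
    configured = config.get("mcpServers", {})
    return sorted(name for name in configured if name not in allow_list)


# Hypothetical developer configuration, for illustration only.
EXAMPLE_CONFIG = """
{
  "mcpServers": {
    "jira": {"command": "docker", "args": ["run", "-i", "example/jira-mcp"]},
    "prod-postgres": {"command": "npx", "args": ["example-postgres-mcp"]}
  }
}
"""

if __name__ == "__main__":
    print(unapproved_servers(EXAMPLE_CONFIG, allow_list={"jira"}))
```

Matching on the server name is the weakest possible check, since the name is just a label the developer chose. A real policy would more likely compare the command, container image, or URL each entry points to.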

The Upside

MCP isn’t just a governance problem—it’s a genuine leverage point. Teams that build internal MCP servers for their proprietary systems (internal APIs, knowledge bases, custom tooling) give their AI tools access to organization-specific context that generic off-the-shelf tools lack. That’s a meaningful answer to the “AI doesn’t know our codebase” problem, and it’s composable: you build the server once and every MCP-compatible tool in your stack can use it.
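To give a feel for what “build the server once” involves, here is a deliberately simplified sketch of the request shapes. MCP messages are JSON-RPC 2.0, and tools are discovered and invoked through the `tools/list` and `tools/call` methods. Everything else here is an assumption for illustration: the `search_runbooks` tool and its data are hypothetical, and the sketch omits the initialization handshake, transports, and the input schemas a real server advertises. For production use, one of the official SDKs is the better starting point.

```python
import json
from typing import Callable

# Registry of tool name -> Python function (illustrative, not an SDK API).
TOOLS: dict[str, Callable[..., str]] = {}


def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function as a callable tool under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def search_runbooks(query: str) -> str:
    """Hypothetical internal tool: look up a runbook by keyword."""
    runbooks = {"deploy": "See runbook RB-12", "rollback": "See runbook RB-07"}
    return runbooks.get(query.lower(), "No runbook found")


def handle(request_text: str) -> str:
    """Answer a single JSON-RPC request for tools/list or tools/call."""
    request = json.loads(request_text)
    request_id = request.get("id")
    method = request.get("method")

    if method == "tools/list":
        result = {
            "tools": [
                {"name": name, "description": (fn.__doc__ or "").strip()}
                for name, fn in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        params = request["params"]
        text = TOOLS[params["name"]](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}]}
    else:
        # -32601 is the standard JSON-RPC "method not found" code.
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": request_id, "error": error})

    return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})


if __name__ == "__main__":
    call = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "tools/call",
        "params": {"name": "search_runbooks", "arguments": {"query": "deploy"}},
    }
    print(handle(json.dumps(call)))
```

Because the client only sees tool names, descriptions, and results, the same server can sit behind any MCP-compatible tool without changes, which is the composability the paragraph above describes.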

The teams that understand MCP now are building leverage. The teams that ignore it risk spending the next 18 months on emergency cleanup once they discover that their AI tools had broad access to systems nobody meant to expose.

MCP quietly became mandatory. The question is whether you’re governing it or not.