GitHub Copilot Goes Fully Agentic in JetBrains: Hooks, MCP, and Instruction Files

Mar 28, 2026

In mid-March 2026, GitHub promoted a major bundle of Copilot agentic capabilities to general availability in JetBrains IDEs, moving key features out of preview for day-to-day use.

The changelog reads like a checklist of what “serious agentic IDE support” now means:

- Custom agents and sub-agents, plus a planning-oriented agent workflow for breaking down complex work

- Agent hooks in public preview, so teams can run custom commands at defined points in an agent session

- MCP auto-approve at server and tool granularity to reduce approval friction when policies allow it

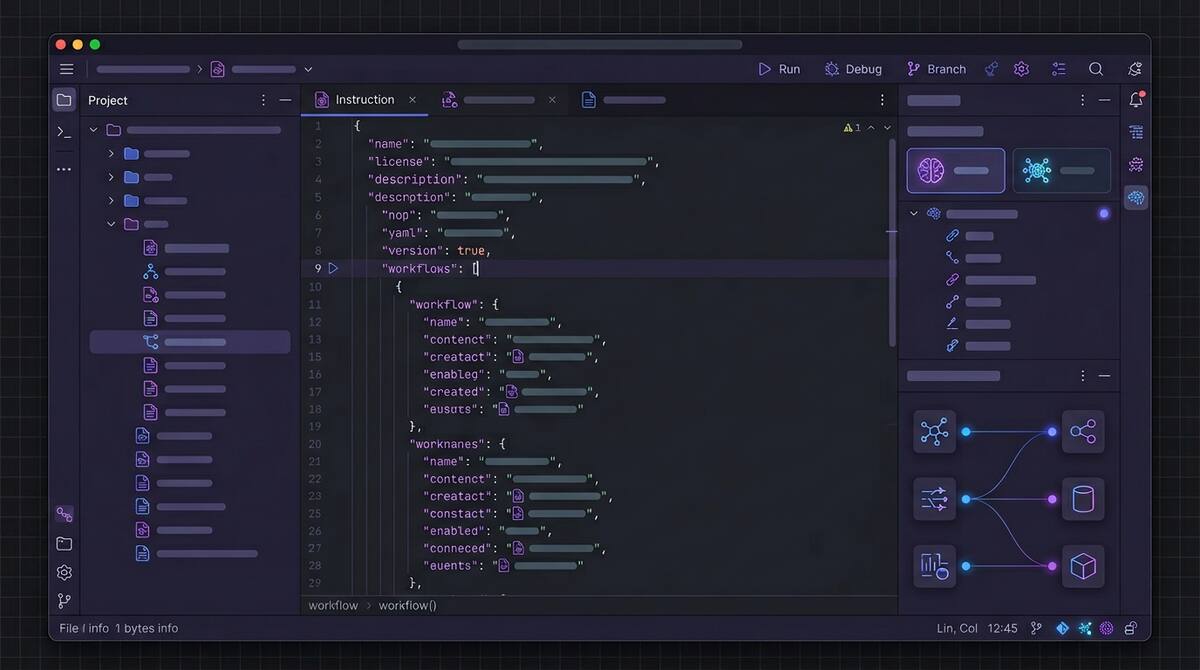

- Automatic discovery of

AGENTS.mdandCLAUDE.mdinstruction files during agent sessions - Auto model selection generally available, with Copilot choosing models based on availability and performance

- An extended reasoning experience for models that expose more explicit thinking, such as Codex-class workflows

Why JetBrains Users Should Care

JetBrains IDEs are where many teams live for deep language support, refactoring, and navigation. Agent features only matter if they meet developers in that workflow, not as a separate tool they resent switching to.

GA here signals that GitHub considers the integration stable enough to be a default expectation rather than an experiment.

Hooks Are the Quiet Power Feature

Hooks are easy to underestimate. They are how organizations turn generic agent behavior into org-specific guardrails:

- run policy checks before tool use

- inject logging after actions

- block or warn on sensitive paths

- attach internal metadata for audit trails

If your company is serious about agents, hooks are often the bridge between “cool demo” and “allowed in production repos.”
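To make the guardrail idea concrete, here is a minimal sketch of the kind of check a hook command might run before an agent touches a file. It is not the Copilot hook API: the function `check_path`, the `Decision` type, and the glob patterns are all hypothetical, and how a real hook receives the path or reports its verdict depends on the hook configuration your IDE plugin documents.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase

# Hypothetical policy lists; the patterns are illustrative only.
BLOCKED_PATTERNS = ["secrets/*", "*.pem", ".env"]
WARN_PATTERNS = ["infra/*", "*.tf"]


@dataclass
class Decision:
    action: str  # "allow", "warn", or "block"
    reason: str  # human-readable note, suitable for an audit log


def check_path(path: str) -> Decision:
    """Classify a repo-relative path the agent wants to read or modify."""
    # Blocked patterns win over warnings, so check them first.
    for pattern in BLOCKED_PATTERNS:
        if fnmatchcase(path, pattern):
            return Decision("block", f"matches blocked pattern {pattern!r}")
    for pattern in WARN_PATTERNS:
        if fnmatchcase(path, pattern):
            return Decision("warn", f"matches sensitive pattern {pattern!r}")
    return Decision("allow", "no policy match")
```

A hook script built on this would call `check_path` for each path involved in a tool call, log the returned `reason`, and signal a block in whatever way the hook mechanism expects. Keeping the policy in plain data makes it easy to review alongside the rest of the repo.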

Instruction Files Are Becoming Standard

Support for AGENTS.md and CLAUDE.md discovery is another step toward repo-native agent configuration. The repo becomes the source of truth for how agents should behave, not a pile of private chat prompts.

That is good for consistency and onboarding. It also means those files deserve review, versioning, and ownership like any other critical config.
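One way to give these files an owner is to list them in CI so reviewers can see where agent instructions live. The sketch below is an assumption-laden illustration: `find_instruction_files` is a hypothetical helper, and it simply scans the whole repo for the two filenames. It does not claim to reproduce how Copilot itself discovers the files.

```python
from pathlib import Path

# The two filenames named in the changelog.
INSTRUCTION_FILENAMES = {"AGENTS.md", "CLAUDE.md"}


def find_instruction_files(repo_root: str) -> list[Path]:
    """Return repo-relative paths of every instruction file under repo_root."""
    root = Path(repo_root)
    return sorted(
        p.relative_to(root)
        for p in root.rglob("*.md")
        if p.name in INSTRUCTION_FILENAMES and p.is_file()
    )
```

The resulting list could be compared against a code-owners file or printed in a pull request check, so a new instruction file never lands without review.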

MCP Auto-Approve Needs Policy, Not Optimism

MCP auto-approve can remove friction, but friction is sometimes the security model. Teams should pair it with:

- least-privilege MCP servers

- clear allow lists

- logging and traceability

GitHub’s own agent logging improvements elsewhere in March are part of the same puzzle: speed and control have to be designed together.
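As a rough illustration of "allow list plus traceability" at server and tool granularity, consider the sketch below. Everything in it is hypothetical: the server names, the tool names, the `ALLOW_LIST` shape, and the `is_auto_approved` function are invented for this example and do not reflect Copilot's actual settings format.

```python
import logging

logger = logging.getLogger("mcp_policy")

# Hypothetical allow list. "*" approves every tool on a server;
# a set approves only the named tools. Unlisted servers are never approved.
ALLOW_LIST = {
    "docs-search": "*",
    "issue-tracker": {"get_issue", "list_issues"},
}


def is_auto_approved(server: str, tool: str) -> bool:
    """Decide whether a tool call may skip manual approval, and log the decision."""
    allowed = ALLOW_LIST.get(server)
    approved = allowed == "*" or (isinstance(allowed, set) and tool in allowed)
    # Log every decision, approved or not, so there is a trace to audit later.
    logger.info("mcp call server=%s tool=%s auto_approved=%s", server, tool, approved)
    return approved
```

The default here is deny: anything not explicitly listed falls back to manual approval, which keeps the friction exactly where the policy has not yet made a decision.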

The Bottom Line

Copilot’s JetBrains GA is not just feature expansion. It is a statement that agentic workflows are becoming baseline IDE functionality for a large slice of the market.

If you lead an engineering org, the question is no longer whether JetBrains shops will use agents. It is whether your standards for hooks, instruction files, and MCP permissions are ready for that reality.